The Science of AI Hallucinations: Identifying H-Neurons in Large Language Models

Author: SciPulse

Uploaded: 2026-03-07

Views: 361

Description:

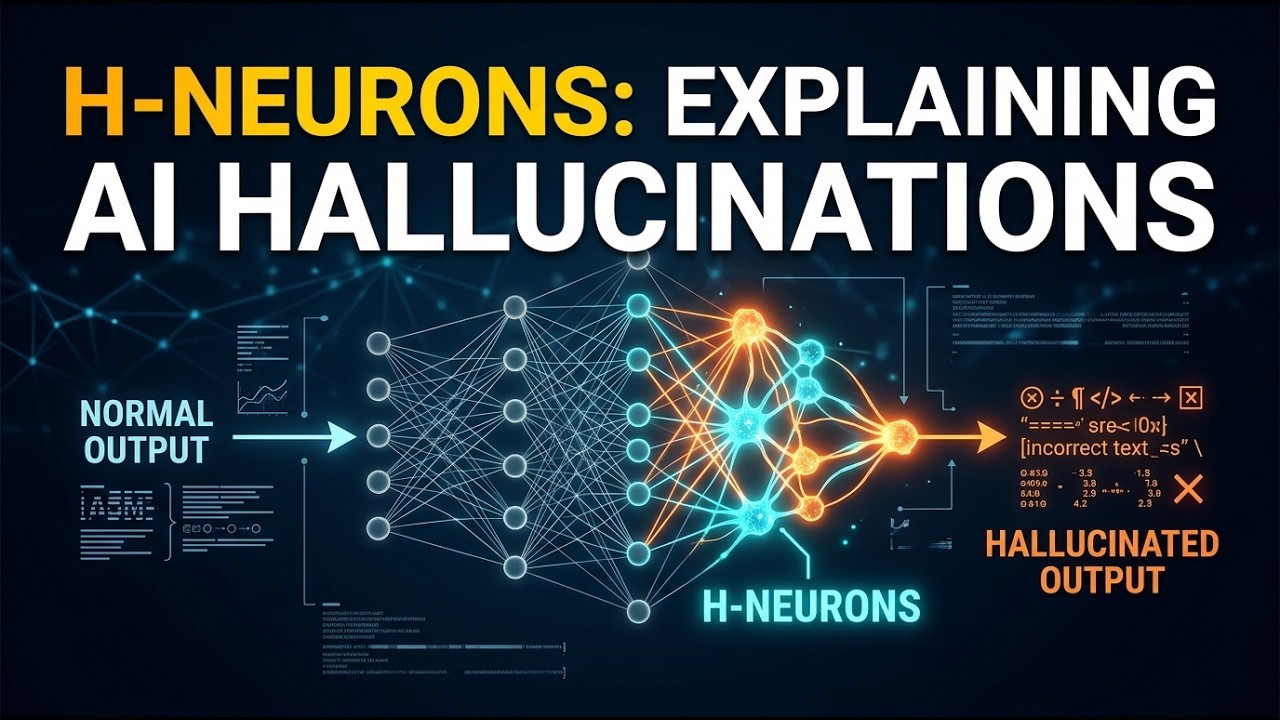

Why do Large Language Models (LLMs) hallucinate? While researchers often examine training data or prompt engineering for answers, new research suggests the cause might lie deep inside the neural circuitry of the models themselves.

In this episode of SciPulse, we explore the research paper “H-Neurons: On the Existence, Impact, and Origin of Hallucination-Associated Neurons in LLMs.” The study identifies an extremely sparse subset of neurons (less than 0.1% of all neurons in the model) that appears strongly associated with generating hallucinated outputs.

Topics Discussed in This Episode:

• The Discovery of H-Neurons: How researchers used the CETT metric, a measure of each neuron's contribution, to isolate specific neurons inside Feed-Forward Networks whose activity signals a hallucination before it occurs

• The Over-Compliance Connection: Why hallucinations may arise from a model’s tendency to prioritize user satisfaction over factual accuracy or safety

• Causal Intervention Experiments: What happens when these neurons are suppressed or amplified, and how they influence susceptibility to misleading prompts or harmful instructions

• Origins During Pre-Training: Evidence suggesting hallucination circuits emerge during the initial pre-training phase rather than later alignment or fine-tuning

• Understanding the Neural Mechanism: Why identifying specific neuron groups moves AI research closer to understanding the internal mechanics of transformer models

• Toward Reliable AI Systems: How targeted interventions could help reduce hallucinations and improve trust in AI systems
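
For viewers who want a concrete picture of what "suppressing or amplifying" neurons can mean, here is a minimal toy sketch in plain Python. It is not the paper's code or method: the tiny feed-forward layer, the weights, the function name `ffn_forward`, and the scaling factor `alpha` are all assumptions made up for illustration. The idea shown is simply that the activations of a chosen set of hidden neurons are multiplied by a factor before the layer's output is computed (0 suppresses them, values above 1 amplify them).

```python
from typing import Iterable, List, Sequence


def relu(values: Sequence[float]) -> List[float]:
    """Element-wise ReLU."""
    return [max(0.0, v) for v in values]


def matvec(matrix: Sequence[Sequence[float]], vector: Sequence[float]) -> List[float]:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def ffn_forward(
    x: Sequence[float],
    w_in: Sequence[Sequence[float]],
    w_out: Sequence[Sequence[float]],
    neuron_ids: Iterable[int] = (),
    alpha: float = 1.0,
) -> List[float]:
    """Toy feed-forward layer: out = W_out @ relu(W_in @ x).

    Hidden neurons listed in `neuron_ids` (hypothetical, chosen by hand here)
    have their activations scaled by `alpha` before the output projection.
    """
    hidden = relu(matvec(w_in, x))
    selected = set(neuron_ids)
    hidden = [h * alpha if i in selected else h for i, h in enumerate(hidden)]
    return matvec(w_out, hidden)


# Made-up weights: 2 inputs, 3 hidden neurons, 2 outputs.
x = [1.0, 2.0]
w_in = [[1.0, 0.0], [0.0, 1.0], [1.0, -1.0]]
w_out = [[1.0, 1.0, 1.0], [0.0, 2.0, 0.0]]

baseline = ffn_forward(x, w_in, w_out)
suppressed = ffn_forward(x, w_in, w_out, neuron_ids=[1], alpha=0.0)
amplified = ffn_forward(x, w_in, w_out, neuron_ids=[1], alpha=2.0)

print(baseline, suppressed, amplified)
```

In a real transformer the same kind of scaling would be applied to selected neurons inside the model's feed-forward blocks during generation, and the effect would be measured on the model's answers rather than on a toy output vector.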

This research represents an important shift away from treating AI systems as black boxes and toward understanding their internal computational structure.

Original Research Paper:

“H-Neurons: On the Existence, Impact, and Origin of Hallucination-Associated Neurons in LLMs”

https://arxiv.org/pdf/2512.01797

Educational Disclaimer: This video is an educational overview summarizing key findings from the research paper. It does not replace reading the original study for full technical details and methodology.

#AI #MachineLearning #LLMs #Hallucinations #HNeurons #ArtificialIntelligence #Interpretability #AISafety #DeepLearning #Transformer #SciPulse #AIResearch #NeuralNetworks
