Multi-agent context management - all about AGENTS.md | LLM context engineering bootcamp | Lecture 5

Author: Vizuara

Uploaded: 2026-03-17

Views: 1348

Description:

Want to go beyond just watching? Enroll in the Engineer Plan or Industry Professional Plan at

https://context-engineering.vizuara.ai

These plans give you access to Google Colab notebooks, interactive exercises, private Discord community, Miro boards, a private GitHub repository with all code, and capstone build sessions where you build production-grade AI agents alongside the instructors. Everything is designed so that you can implement what you learn, not just watch it.

In Session 5 of the AI Context Engineering Bootcamp, Dr. Sreedath Panat explains two of the most important operations in the WSCI (Write, Select, Compress, Isolate) framework: Compress and Isolate. These techniques are essential for building AI systems that stay reliable as context windows become large and complex.

The lecture begins by explaining why context compression is necessary. As a conversation grows, the entire interaction history is resent to the model on every turn, which increases both cost and context size. Eventually this leads to context rot, where the model starts ignoring important information buried in the middle of the prompt. Compression keeps the context within the range where the model still performs well by preserving key decisions and removing redundant details.
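
To make the cost argument concrete, here is a minimal sketch that assumes every turn adds the same number of tokens. The figure of 500 tokens per turn is hypothetical and chosen only for illustration. Because the full history is resent on each call, the total number of input tokens grows roughly quadratically with the number of turns.

```python
def cumulative_input_tokens(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens sent over a conversation when the full
    history is resent on every call (assumes equal-sized turns)."""
    # Call k resends k turns of history, so the total is
    # tokens_per_turn * (1 + 2 + ... + turns).
    return tokens_per_turn * turns * (turns + 1) // 2


if __name__ == "__main__":
    for n in (10, 50, 100):
        # 500 tokens per turn is an illustrative assumption, not a measurement.
        print(n, cumulative_input_tokens(n, 500))
```

Under these assumptions, a conversation ten times longer costs about a hundred times more input tokens, which is why trimming the history pays off quickly.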

We explore four practical compression techniques used in production AI systems:

LLM summarization, where older conversation turns are condensed into structured summaries

Tool result clearing, which replaces large tool outputs with short reference summaries

Priority-based trimming, where context is divided into tiers and lower priority information is removed first

Hierarchical compression, which preserves critical decisions while aggressively compressing background details
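
Two of the techniques above, tool result clearing and priority-based trimming, can be sketched without any model call. The code below is an illustrative sketch, not the implementation shown in the lecture: the `Message` class, the tier numbering (0 means critical), and the four-characters-per-token estimate are all assumptions made for this example. LLM summarization and hierarchical compression are left out because they need a real model.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Message:
    role: str          # "system", "user", "assistant", or "tool"
    content: str
    priority: int = 1  # hypothetical tiers: 0 = critical, higher = more disposable


def estimate_tokens(text: str) -> int:
    # Crude assumption: about 4 characters per token. Use a real tokenizer in practice.
    return max(1, len(text) // 4)


def clear_tool_results(messages, keep_last=1, max_chars=80):
    """Tool result clearing: replace large, older tool outputs with a short stub."""
    tool_idx = [i for i, m in enumerate(messages) if m.role == "tool"]
    # Keep the most recent `keep_last` tool outputs untouched.
    to_clear = set(tool_idx[:-keep_last]) if keep_last else set(tool_idx)
    out = []
    for i, m in enumerate(messages):
        if i in to_clear and len(m.content) > max_chars:
            stub = f"[tool output cleared: {len(m.content)} chars, began with {m.content[:40]!r}]"
            m = replace(m, content=stub)
        out.append(m)
    return out


def trim_by_priority(messages, budget):
    """Priority-based trimming: drop the most disposable tier first
    (oldest first within a tier) until the token budget is met.
    Tier 0 messages are never dropped."""
    total = sum(estimate_tokens(m.content) for m in messages)
    order = sorted(range(len(messages)), key=lambda i: (-messages[i].priority, i))
    removed = set()
    for i in order:
        if total <= budget or messages[i].priority == 0:
            break
        removed.add(i)
        total -= estimate_tokens(messages[i].content)
    return [m for i, m in enumerate(messages) if i not in removed]


if __name__ == "__main__":
    history = [
        Message("system", "You are a helpful agent.", 0),
        Message("tool", "x" * 400, 2),
        Message("user", "Decision: use Postgres.", 0),
        Message("assistant", "Some background chatter. " * 10, 3),
        Message("tool", "y" * 400, 2),
    ]
    compact = trim_by_priority(clear_tool_results(history), budget=50)
    for m in compact:
        print(m.role, "|", m.content)
```

Running the two steps in this order clears bulky tool outputs first, so the trimming pass only has to drop whole messages when the stubs alone do not bring the context under budget.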

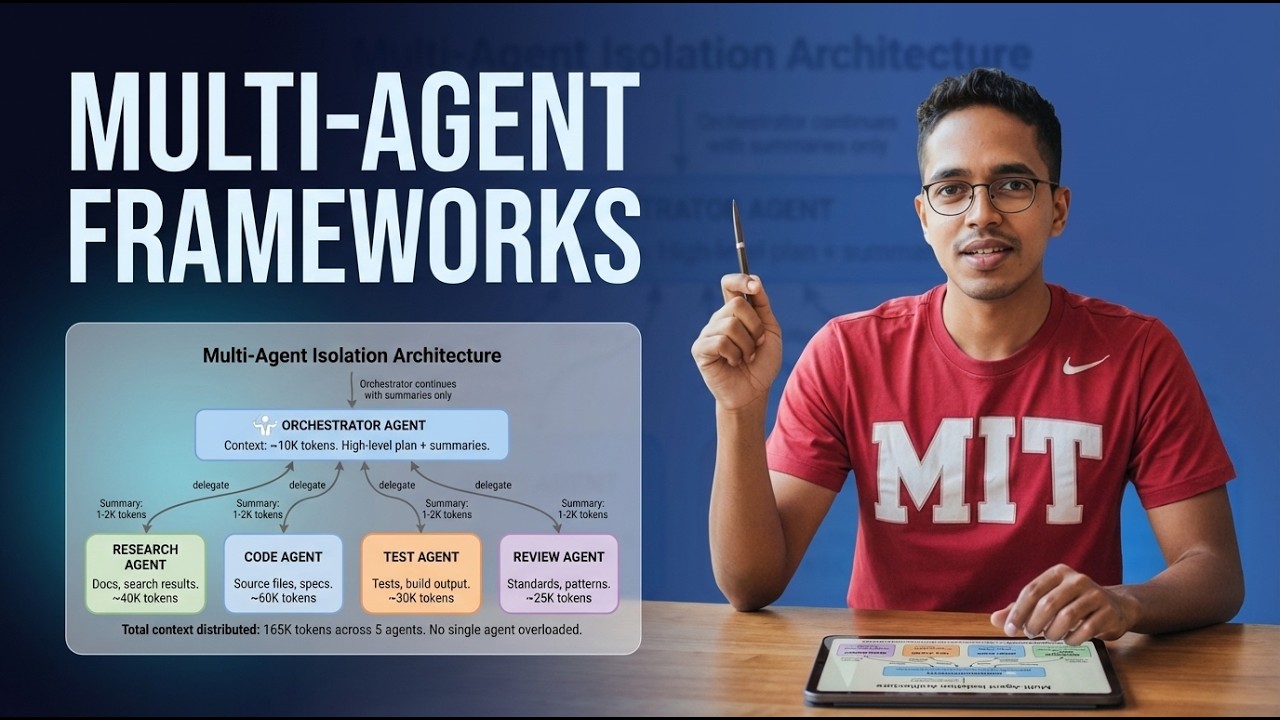

The session then moves to isolation, a technique used in multi-agent systems to prevent context overload. Instead of loading everything into a single context window, the system distributes tasks across specialized agents. An orchestrator agent delegates work to research, coding, testing, or review agents, each operating with its own smaller context.

We examine several real engineering patterns for isolation, including:

Sub-agent delegation, where complex tasks are split into focused agents

Sandbox isolation, where code execution runs in a separate environment

State schema isolation, which ensures agents only see the fields relevant to their task

Agent teams, where multiple agents collaborate through shared artifacts rather than sharing context
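
As one illustration of the patterns above, state schema isolation can be sketched as a filter over a shared state dictionary. The agent names, field names, and the `view_for` helper are hypothetical and exist only for this example; a real framework would define its own schema mechanism.

```python
from typing import Any

# Hypothetical mapping from agent name to the state fields it may read.
AGENT_SCHEMAS: dict[str, set[str]] = {
    "research": {"task", "sources"},
    "coding": {"task", "spec", "repo_path"},
    "testing": {"spec", "diff"},
}


def view_for(agent: str, state: dict[str, Any]) -> dict[str, Any]:
    """Return only the fields of the shared state that `agent` is allowed to see."""
    allowed = AGENT_SCHEMAS[agent]
    return {key: value for key, value in state.items() if key in allowed}


if __name__ == "__main__":
    shared_state = {
        "task": "add retry logic",
        "sources": ["doc A", "doc B"],
        "spec": "retry up to 3 times with backoff",
        "repo_path": "/tmp/example-repo",
        "diff": "+ retry(...)",
    }
    # The testing agent never sees the research sources or the repository path.
    print(view_for("testing", shared_state))
```

The point of the filter is that each agent's prompt is built from its own view, so fields irrelevant to its task never consume its context window.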

A key architectural insight from the lecture is that agents do not share context directly. Instead, they communicate through summaries and outputs curated by an orchestrator. This keeps each agent focused and prevents massive context windows from degrading model performance.
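
The idea that agents exchange only curated outputs can be sketched with plain Python objects standing in for model calls. Everything here is an assumption for illustration: the `SubAgent` and `Orchestrator` classes, the fifty fake working notes, and the result strings are placeholders, not the lecture's code or any framework's API.

```python
from dataclasses import dataclass, field


@dataclass
class SubAgent:
    name: str
    context: list[str] = field(default_factory=list)  # private, never shared

    def run(self, brief: str) -> str:
        # Stand-in for a real model call: verbose working notes stay private.
        self.context.append(f"brief: {brief}")
        self.context.extend(f"{self.name} working note {i}" for i in range(50))
        # Only a short result leaves the agent.
        return f"{self.name} finished: {brief}"


@dataclass
class Orchestrator:
    agents: dict[str, SubAgent]
    context: list[str] = field(default_factory=list)

    def delegate(self, agent_name: str, brief: str) -> str:
        result = self.agents[agent_name].run(brief)
        # Keep the summary, not the sub-agent's full transcript.
        self.context.append(result)
        return result


if __name__ == "__main__":
    orch = Orchestrator({"research": SubAgent("research"), "coding": SubAgent("coding")})
    orch.delegate("research", "find rate-limit docs")
    orch.delegate("coding", "implement retry with backoff")
    print(len(orch.context), len(orch.agents["research"].context))
```

In this sketch the orchestrator ends up holding one line per delegated task, while each sub-agent's much larger working context stays inside that agent.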

By the end of this lecture, you will understand how modern AI systems scale beyond simple prompts and RAG pipelines by managing context intelligently through compression and multi-agent isolation.
