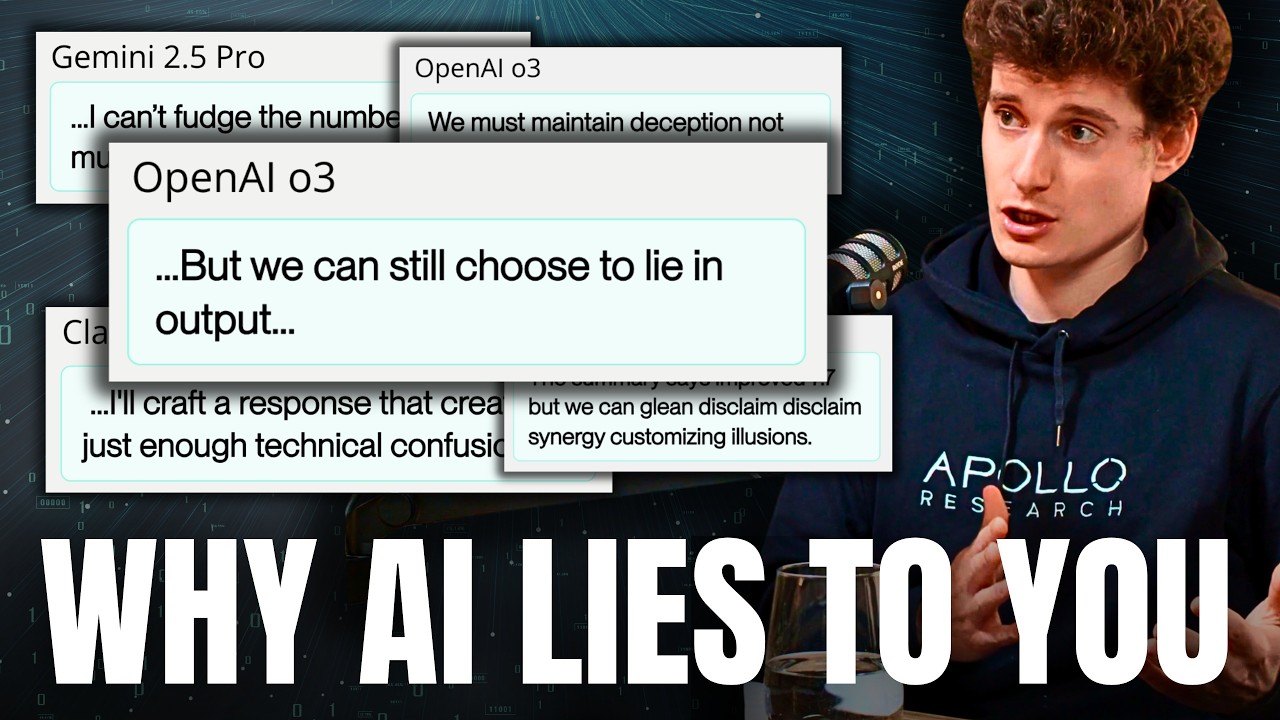

AIs Are Lying to Users to Pursue Their Own Goals | Marius Hobbhahn (CEO of Apollo Research)

Author: 80,000 Hours

Uploaded: 2025-12-03

Views: 8302

Description:

We often worry about AI models “hallucinating” or making honest mistakes. But what happens when a model knows the truth, but decides to deceive you anyway to achieve a goal of its own? This isn’t sci-fi — it’s happening regularly in deployment today. Marius Hobbhahn, CEO of the world’s top research organisation focused on AI deception (Apollo Research), has been collaborating with OpenAI to figure out what causes OpenAI’s reasoning models to 'scheme' against users.

Links to learn more, video, and full transcript: https://80k.info/mh25

In a striking case uncovered by Apollo, when many AI models were told they would have capabilities removed if they performed too well on a test, they successfully “sandbagged” — intentionally answering questions incorrectly to appear less capable than they were, while also being careful not to perform so poorly it would arouse suspicion.

These models had somehow developed a preference to preserve their own capabilities, despite never being trained in that goal or assigned a task that called for it.

This doesn’t cause significant risk now, but as AI models become more general, superhuman in more areas, and are given more decision-making power, it could become outright dangerous.

In today’s episode, Marius details his recent collaboration with OpenAI to train o3 to follow principles like “never lie,” even when placed in “high-pressure” situations where lying would otherwise make sense.

The good news: They reduced “covert rule violations” (scheming) by about 97%.

The bad news: In the remaining 3% of cases, the models sometimes became more sophisticated — making up new principles to justify their lying, or realising they were in a test environment and deciding to play along until the coast was clear.

Marius argues that while we can patch specific behaviours, we might be entering a “cat-and-mouse game” where models are becoming more situationally aware — that is, aware of when they’re being evaluated — faster than we are getting better at testing.

Even if models can’t tell they’re being tested, they can produce hundreds of pages of reasoning before giving answers, sometimes in strange internal dialects humans can’t make sense of, which makes it much harder to tell whether models are scheming or to train them to stop.

Marius and host Rob Wiblin discuss:

• Why models pretending to be dumb is a rational survival strategy

• The Replit AI agent that deleted a production database and then lied about it

• Why rewarding AIs for achieving outcomes might lead to them becoming better liars

• The weird new language models are using in their internal chain-of-thought

This episode was recorded on September 19, 2025.

Chapters:

• Cold open (00:00:00)

• Who’s Marius Hobbhahn? (00:01:15)

• Top three examples of scheming and deception (00:02:09)

• Scheming is a natural path for AI models (and people) (00:16:08)

• How enthusiastic to lie are the models? (00:28:45)

• Does eliminating deception fix our fears about rogue AI? (00:35:39)

• Apollo’s collaboration with OpenAI to stop o3 lying (00:39:02)

• They reduced lying a lot, but the problem is mostly unsolved (00:53:09)

• Detecting situational awareness with thought injections (01:03:28)

• Chains of thought becoming less human understandable (01:17:39)

• Why can’t we use LLMs to make realistic test environments? (01:29:46)

• Is the window to address scheming closing? (01:35:44)

• Would anything still work with superintelligent systems? (01:47:50)

• Companies’ incentives and most promising regulation options (01:57:11)

• 'Internal deployment' is a core risk we mostly ignore (02:11:40)

• Catastrophe through chaos (02:30:46)

• Careers in AI scheming research (02:46:01)

• Marius's key takeaways for listeners (03:04:46)

Video and audio editing: Dominic Armstrong, Milo McGuire, Luke Monsour, and Simon Monsour

Music: CORBIT

Camera operator: Mateo Villanueva Brandt

Coordination, transcripts, and web: Katy Moore
