Why GPT Hits a Memory Wall

Автор: ML Guy

Загружено: 2026-02-01

Просмотров: 93

Описание:

Large Language Models were never meant to read entire books, and yet today, they can.

So how do modern LLMs reason over tens or even hundreds of thousands of tokens without running out of memory?

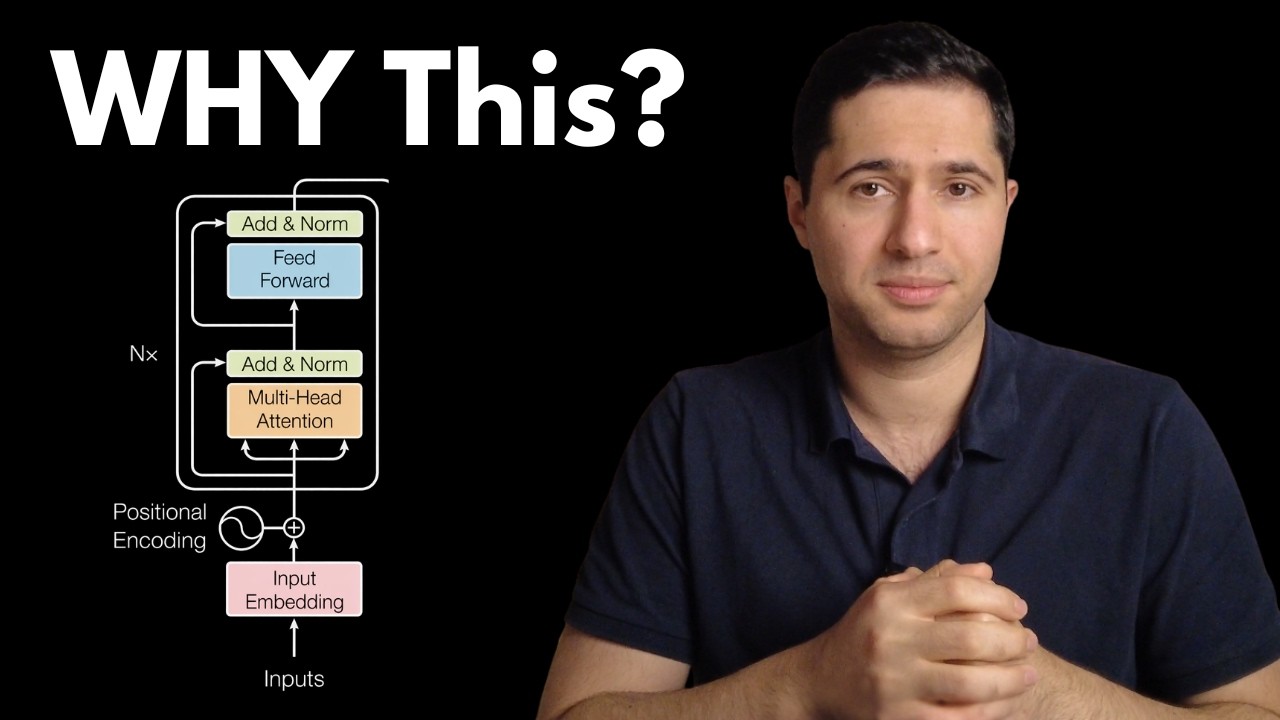

In this video, we dive into Infini-Attention, the architectural shift that allows Transformers to scale beyond fixed context windows. You’ll see why traditional self-attention breaks down at long lengths, why KV Cache alone is not enough, and how modern models rethink attention as memory management rather than brute-force comparison.

We cover:

Why self-attention scales quadratically and hits a hard wall

The limits of KV Cache for very long sequences

How Infini-Attention treats context as a stream, not a matrix

Memory compression, summarization, and trainable memory slots

How models decide what to remember and what to forget

Why RoPE is essential for long-context generalization

How Infini-Attention enables book-length reasoning and persistent conversations

This is not a single trick or a magic formula. It’s a change in how attention itself is designed.

Повторяем попытку...

Доступные форматы для скачивания:

Скачать видео

-

Информация по загрузке: