The Core Building Block Behind GPT (Explained Visually)

Автор: ML Guy

Загружено: 2025-12-21

Просмотров: 293

Описание:

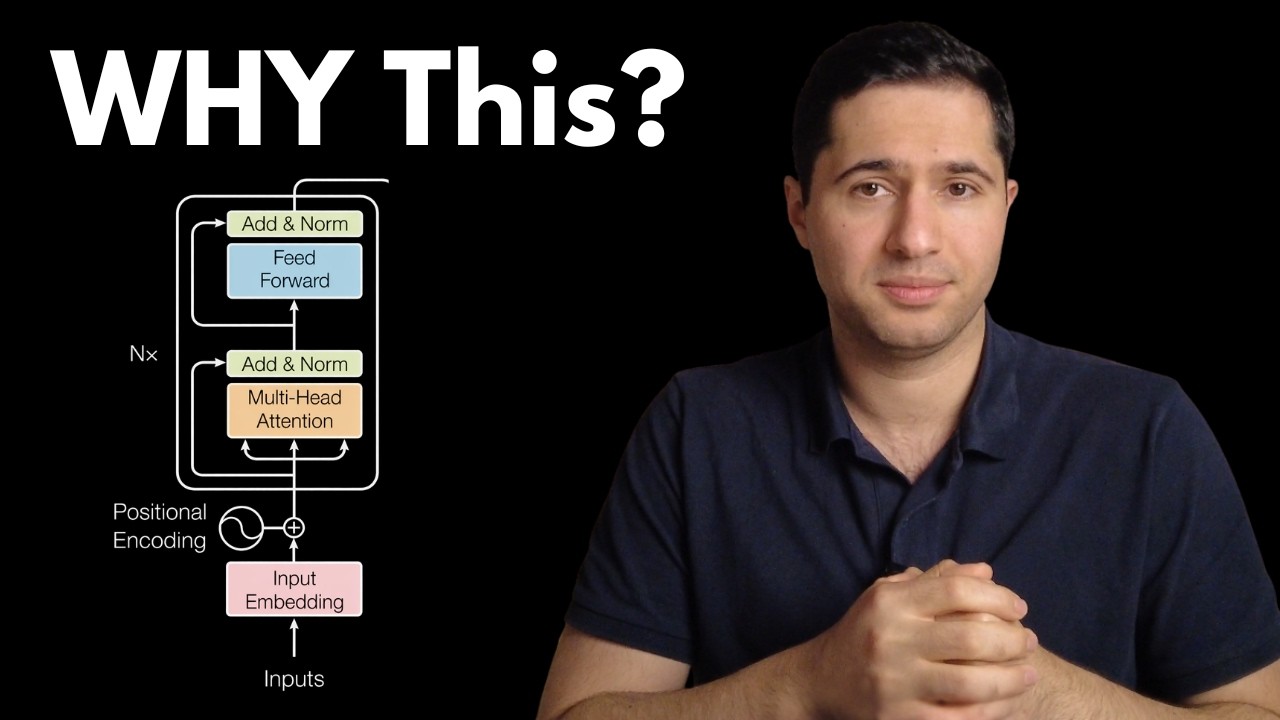

Every modern large language model, GPT, LLaMA, Mistral, and others, is built by stacking the same fundamental unit: the Transformer block.

In this video, we break down exactly what happens inside a single Transformer block, step by step, and explain how its components work together to turn token embeddings into contextual representations.

We cover the three core building blocks of the architecture:

Multi-Head Self-Attention: how tokens exchange information.

Feed-Forward Networks (FFN): how features are transformed independently per token.

Residual Connections and Layer Normalization: why deep Transformers are stable and trainable.

Rather than treating the Transformer as a black box, this video explains the data flow, equations, and design choices that make the architecture scalable and effective.

Topics covered:

Input and output shapes inside a Transformer block

Where attention fits in the computation pipeline

Why residual connections are necessary for deep models

How LayerNorm stabilizes training

How stacking blocks leads to emergent reasoning behavior

Повторяем попытку...

Доступные форматы для скачивания:

Скачать видео

-

Информация по загрузке: