Spring Boot + Ollama | Run Local LLM with Spring AI

Author: Code2CloudX

Uploaded: 2026-03-05

Views: 86

Description:

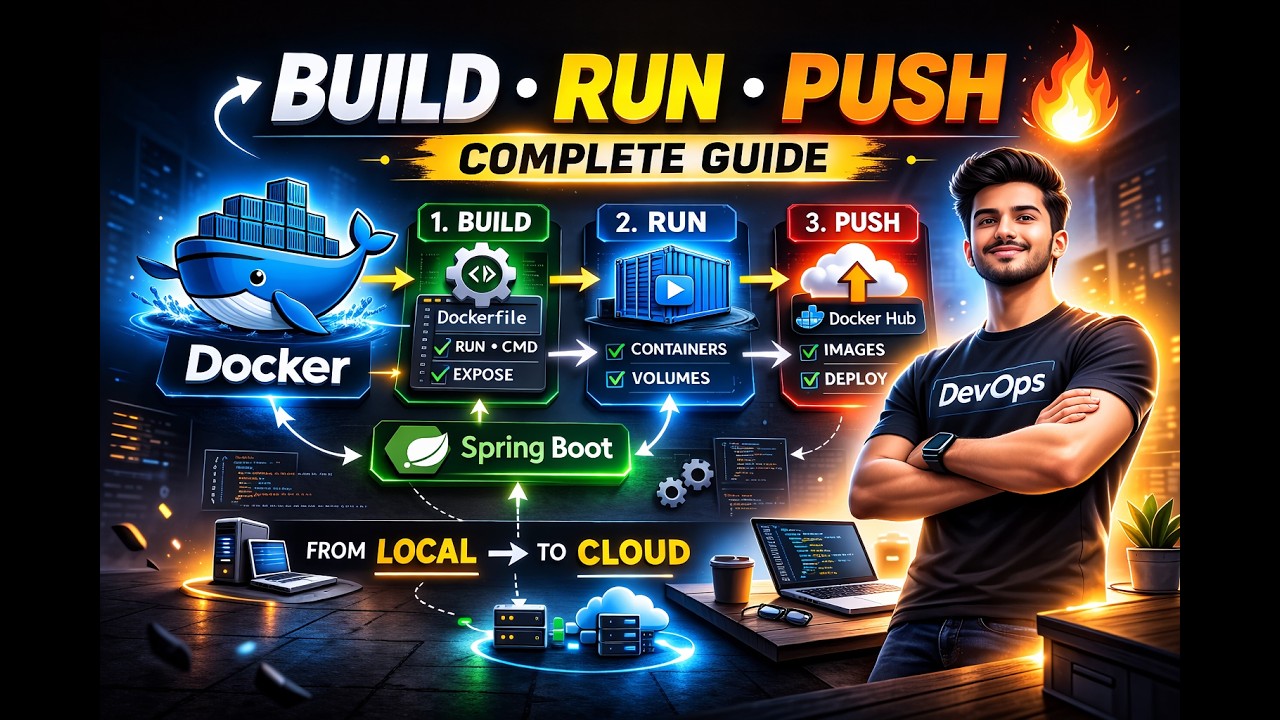

In this video, we learn how to run a Large Language Model (LLM) locally using Ollama and integrate it with a Spring Boot application using Spring AI.

In the previous video, we connected Spring Boot to OpenAI. In this tutorial, we explore how enterprises can run open-source LLMs locally instead of calling cloud APIs.

We will cover:

• What Ollama is

• Running open-source LLMs locally

• Downloading and running a Llama model

• Replacing the OpenAI dependency with Ollama in Spring AI

• Configuring the Spring Boot application.properties file

• Calling the local LLM from a Spring Boot REST API

• Testing the API using Postman
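Under the hood, the steps above come down to HTTP calls to the Ollama server, which by default listens on `http://localhost:11434`. The sketch below is not the Spring AI code from the video; it is a plain-Java smoke test (only `java.net.http`, Java 14+ for the switch syntax) that sends one prompt to Ollama's `/api/generate` endpoint with streaming disabled. It is handy for checking that the local model responds before wiring it into Spring Boot. The model name `llama3` and the class and method names are illustrative assumptions; use whatever model you fetched with `ollama pull`.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

public class OllamaSmokeTest {

    // Assumed address: a default local Ollama install on its default port.
    static final String OLLAMA_URL = "http://localhost:11434/api/generate";

    // Escapes a string so it can be embedded inside a JSON string literal.
    static String jsonEscape(String s) {
        StringBuilder sb = new StringBuilder();
        for (char c : s.toCharArray()) {
            switch (c) {
                case '"' -> sb.append("\\\"");
                case '\\' -> sb.append("\\\\");
                case '\n' -> sb.append("\\n");
                case '\r' -> sb.append("\\r");
                case '\t' -> sb.append("\\t");
                default -> {
                    if (c < 0x20) {
                        // Other control characters become \\uXXXX escapes.
                        sb.append(String.format("\\u%04x", (int) c));
                    } else {
                        sb.append(c);
                    }
                }
            }
        }
        return sb.toString();
    }

    // Builds the JSON body for a single, non-streaming completion request.
    static String buildBody(String model, String prompt) {
        return "{\"model\":\"" + jsonEscape(model) + "\",\"prompt\":\""
                + jsonEscape(prompt) + "\",\"stream\":false}";
    }

    // Sends the prompt and returns the raw JSON reply from the server.
    static String generate(String model, String prompt) throws Exception {
        HttpRequest request = HttpRequest.newBuilder(URI.create(OLLAMA_URL))
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(buildBody(model, prompt)))
                .build();
        HttpResponse<String> response = HttpClient.newHttpClient()
                .send(request, HttpResponse.BodyHandlers.ofString());
        return response.body();
    }

    public static void main(String[] args) throws Exception {
        // "llama3" is an assumed model name; substitute the one you pulled.
        System.out.println(generate("llama3", "Explain Spring AI in one sentence."));
    }
}
```

Run it with `java OllamaSmokeTest.java` while the Ollama server is running; the generated text is in the `response` field of the returned JSON. In the Spring AI version, the server address and model are typically supplied through `application.properties` (for example `spring.ai.ollama.base-url`) and the Ollama starter makes the HTTP calls for you; exact property names can differ between Spring AI releases, so check the reference documentation for the version you use.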

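By the end of this tutorial, your Spring Boot application will be able to communicate with a locally running LLM.

This approach is especially useful for organizations that want to keep their data inside their own infrastructure, because prompts and responses are processed by a model running on their own machines rather than sent to a cloud API.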

Technologies used:

Spring Boot

Spring AI

Ollama

Llama model

Java

REST APIs

This video is part of the "Java Developer to AI Engineer" series.

Subscribe for more tutorials on:

Spring Boot

Microservices

Kafka

DevOps

Cloud

AI integration with Java

#SpringAI

#SpringBoot

#Ollama

#LLM

#JavaAI

#LocalAI

#Code2CloudX
