Tutorial-44: RMSProp optimizer explained in detail | Simplified | Deep Learning

Author: Algorithm Avenue

Uploaded: 2025-09-16

Views: 293

Description:

Connect with us on Social Media!

📸 Instagram: https://www.instagram.com/algorithm_a...

🧵 Threads: https://www.threads.net/@algorithm_av...

📘 Facebook: / algorithmavenue7

🎮 Discord: / discord

In this video, we dive deep into RMSProp (Root Mean Square Propagation) — one of the most popular optimization algorithms in deep learning. 🚀

You’ll learn:

✅ Why RMSProp was introduced (to fix AdaGrad’s diminishing learning rate problem)

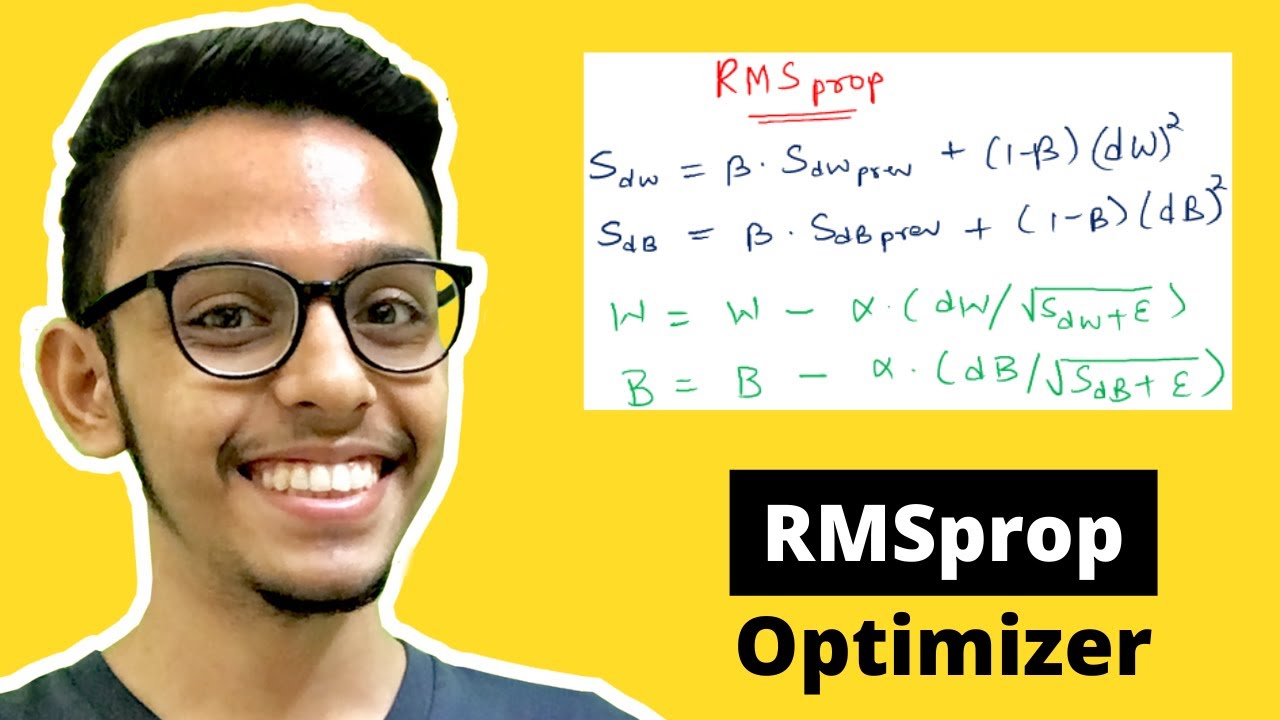

✅ The update rule and how it works step by step

✅ Key hyperparameters like lr, alpha, eps, momentum, and centered explained in simple terms

✅ Comparison with AdaGrad and Adam

✅ Practical intuition on when to use RMSProp (especially for RNNs and non-stationary objectives)

✅ A PyTorch implementation example on sparse data
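
As a rough illustration of the update rule and hyperparameters listed above, here is a minimal pure-Python sketch for a single scalar parameter. It is not the code from the video: the function names (`rmsprop_step`, `adagrad_step`, `last_step_sizes`) and the `state` dictionary are hypothetical, and the arithmetic is written to mirror the documented behaviour of `torch.optim.RMSprop` (`lr`, `alpha`, `eps`, `momentum`, `centered`). The clamp on the centered variance is an added safeguard against floating-point round-off.

```python
import math


def rmsprop_step(param, grad, state, lr=0.01, alpha=0.99, eps=1e-8,
                 momentum=0.0, centered=False):
    """One RMSProp update for a scalar parameter (illustrative sketch)."""
    # Exponential moving average of squared gradients.
    state["square_avg"] = (alpha * state.get("square_avg", 0.0)
                           + (1 - alpha) * grad * grad)
    if centered:
        # Centered variant: subtract the squared running mean of the gradient.
        state["grad_avg"] = (alpha * state.get("grad_avg", 0.0)
                             + (1 - alpha) * grad)
        var = state["square_avg"] - state["grad_avg"] ** 2
        denom = math.sqrt(max(var, 0.0)) + eps  # clamp is an assumption
    else:
        denom = math.sqrt(state["square_avg"]) + eps
    if momentum > 0:
        # Momentum is applied to the already-normalized gradient.
        state["buf"] = momentum * state.get("buf", 0.0) + grad / denom
        return param - lr * state["buf"]
    return param - lr * grad / denom


def adagrad_step(param, grad, state, lr=0.01, eps=1e-8):
    """One AdaGrad update: squared gradients are summed and never decay."""
    state["sum"] = state.get("sum", 0.0) + grad * grad
    return param - lr * grad / (math.sqrt(state["sum"]) + eps)


def last_step_sizes(n_steps=1000, grad=1.0, lr=0.01, alpha=0.9):
    """Size of the final step for each optimizer under a constant gradient."""
    p_rms, p_ada = 0.0, 0.0
    s_rms, s_ada = {}, {}
    for _ in range(n_steps):
        prev_rms, prev_ada = p_rms, p_ada
        p_rms = rmsprop_step(p_rms, grad, s_rms, lr=lr, alpha=alpha)
        p_ada = adagrad_step(p_ada, grad, s_ada, lr=lr)
    return abs(p_rms - prev_rms), abs(p_ada - prev_ada)


if __name__ == "__main__":
    rms, ada = last_step_sizes()
    print(f"RMSProp last step: {rms:.6f}, AdaGrad last step: {ada:.6f}")
```

With a constant gradient, the RMSProp step settles at roughly `lr` because the moving average forgets old gradients, while the AdaGrad step keeps shrinking in proportion to `1 / sqrt(t)`. That is the diminishing learning rate problem mentioned in the first bullet.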

Whether you’re a beginner in machine learning or looking to strengthen your deep learning fundamentals, this tutorial will give you a clear and practical understanding of RMSProp.

👉 If you found this useful, don’t forget to Like, Share, and Subscribe for more awesome content!

#RMSProp #RMSPropOptimizer #DeepLearning #MachineLearning #NeuralNetworks #AI #ArtificialIntelligence #GradientDescent #AdaptiveOptimizers #OptimizationAlgorithm #PyTorch #TensorFlow #Keras #LearnAI #MLforBeginners #DeepLearningForBeginners #DataScience #AIExplained #MachineLearningTutorial #DeepLearningTutorial #Backpropagation #OptimizersInDeepLearning #SGD #AdaGrad #AdamOptimizer #ComputerVision #NLP #AIResearch #AICommunity #MLEngineer
