Lecture 12: The entire Data Preprocessing Pipeline of Large Language Models (LLMs)

Автор: Vizuara

Загружено: 2024-09-17

Просмотров: 41579

Описание:

In this lecture, we learn about the entire data processing pipeline of Large Language Models (LLMs).

In particular, we look at 4 aspects:

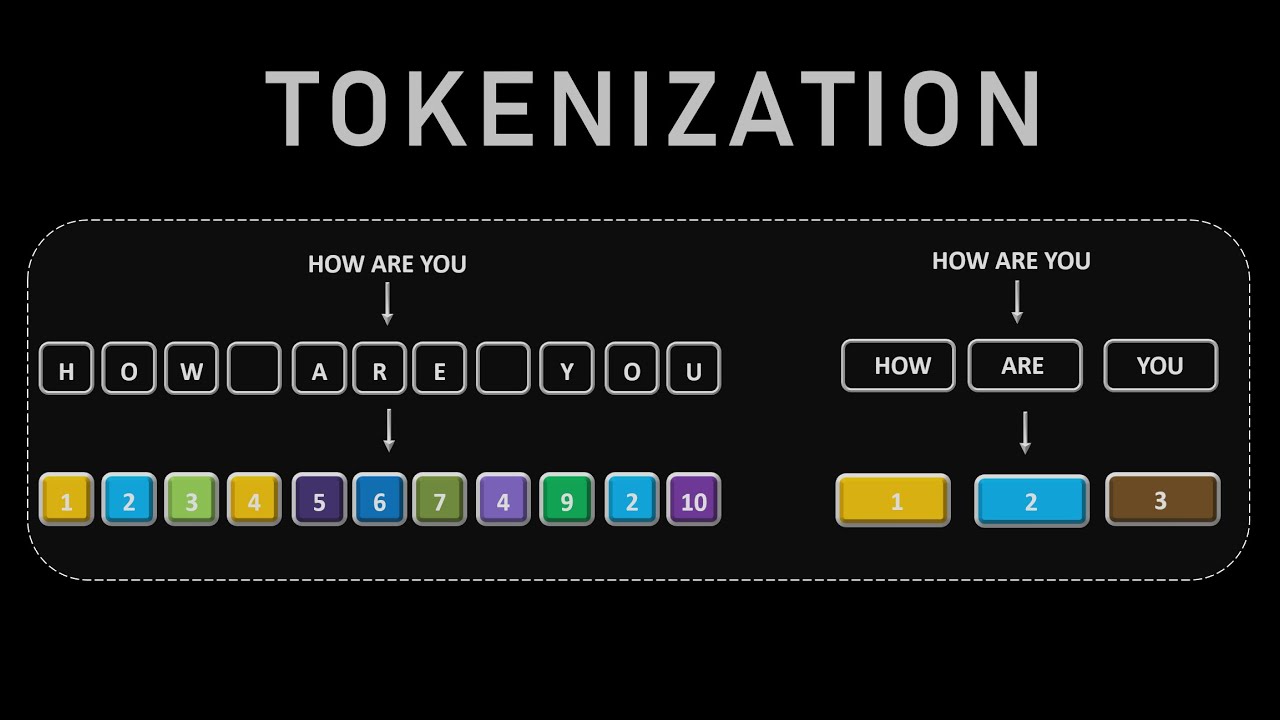

(1) Tokenization: Word based, Subword based (BPE tokenizer), Character based

(2) Token embeddings

(3) Positional embeddings

(4) Input embeddings = Token embeddings + Positional embeddings

The key reference book which this video series very closely follows is Build a Large Language Model from Scratch by Manning Publications. All schematics and their descriptions are borrowed from this incredible book!

This book serves as a comprehensive guide to understanding and building large language models, covering key concepts, techniques, and implementations.

Affiliate links for purchasing the book will be added soon. Stay tuned for updates!

0:00 Lecture agenda

3:55 Word based tokenizer

22:19 Special Context Tokens

29:20 Subword and character tokenizers

36:24 Byte Pair Encoder (BPE)

48:54 Dataloader and input-target pairs

01:03:53 Token embeddings

01:22:46 Positional embeddings

01:30:03 Create final input embeddings

Dataset link:

https://github.com/rasbt/LLMs-from-sc...

Google Colab Code: https://drive.google.com/file/d/1WxQo...

Python regular expression library: https://docs.python.org/3/library/re....

OpenAI Tiktoken library: https://github.com/openai/tiktoken

PyTorch Datasets and Dataloaders: https://pytorch.org/tutorials/beginne...

PyTorch embedding layer: https://pytorch.org/docs/stable/gener...

=================================================

✉️ Join our FREE Newsletter: https://vizuara.ai/our-newsletter/

=================================================

Vizuara philosophy:

As we learn AI/ML/DL the material, we will share thoughts on what is actually useful in industry and what has become irrelevant. We will also share a lot of information on which subject contains open areas of research. Interested students can also start their research journey there.

Students who are confused or stuck in their ML journey, maybe courses and offline videos are not inspiring enough. What might inspire you is if you see someone else learning and implementing machine learning from scratch.

No cost. No hidden charges. Pure old school teaching and learning.

=================================================

🌟 Meet Our Team: 🌟

🎓 Dr. Raj Dandekar (MIT PhD, IIT Madras department topper)

🔗 LinkedIn: / raj-abhijit-dandekar-67a33118a

🎓 Dr. Rajat Dandekar (Purdue PhD, IIT Madras department gold medalist)

🔗 LinkedIn: / rajat-dandekar-901324b1

🎓 Dr. Sreedath Panat (MIT PhD, IIT Madras department gold medalist)

🔗 LinkedIn: / sreedath-panat-8a03b69a

🎓 Sahil Pocker (Machine Learning Engineer at Vizuara)

🔗 LinkedIn: / sahil-p-a7a30a8b

🎓 Abhijeet Singh (Software Developer at Vizuara, GSOC 24, SOB 23)

🔗 LinkedIn: / abhijeet-singh-9a1881192

🎓 Sourav Jana (Software Developer at Vizuara)

🔗 LinkedIn: / souravjana131

Повторяем попытку...

Доступные форматы для скачивания:

Скачать видео

-

Информация по загрузке: