Agreement and Alignment for Human-AI Collaborative Decision Making

Author: Simons Institute for the Theory of Computing

Uploaded: 2026-01-13

Views: 352

Description:

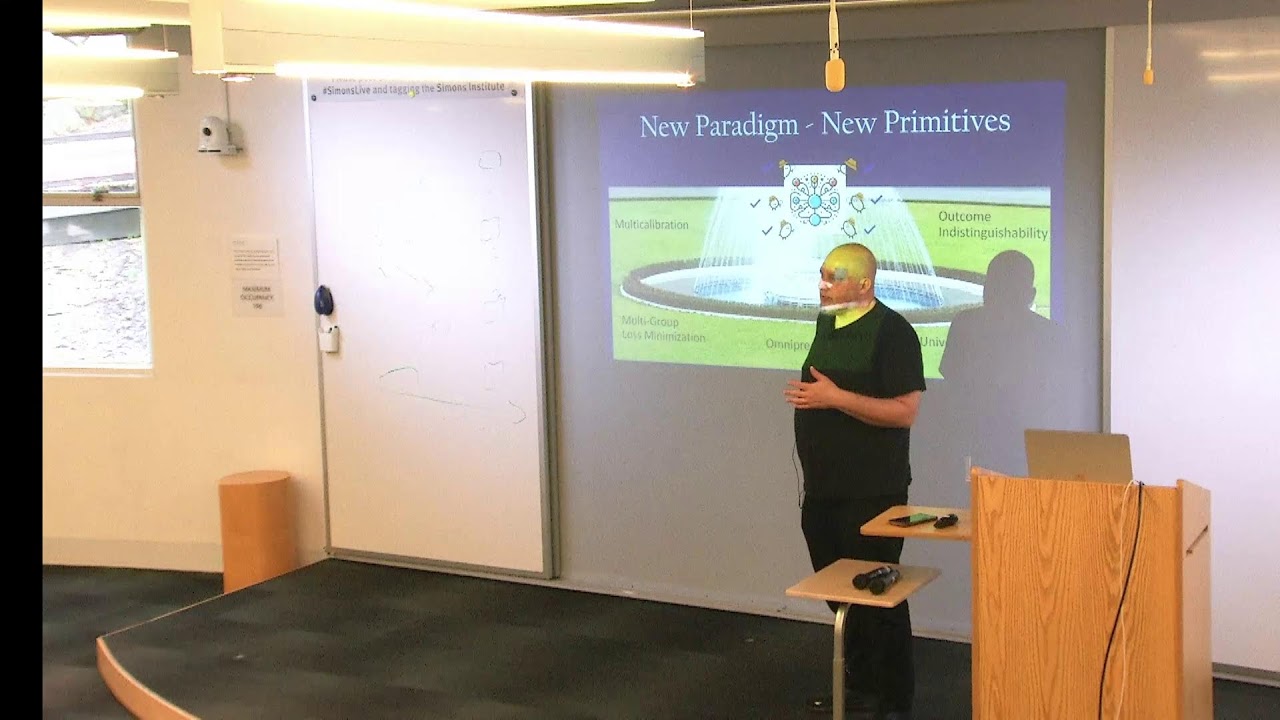

Aaron Roth (University of Pennsylvania)

https://simons.berkeley.edu/talks/aar...

Bridging Prediction and Intervention Problems in Social Systems

As AI models become increasingly powerful, it is attractive to use them in important decision-making pipelines, in collaboration with human decision makers. But how should a human being and a machine learning model collaborate to reach decisions that are better than either could achieve alone? If the human and the AI model were perfect Bayesians, operating in a setting with a commonly known and correctly specified prior, Aumann’s classical agreement theorem would give us one answer: they could engage in conversation about the task at hand, and their conversation would be guaranteed to converge to (accuracy-improving) agreement. This classical result, however, requires many implausible assumptions about both the knowledge and the computational power of the two parties. We show how to recover similar (and more general) results using only computationally and statistically tractable assumptions, which substantially relax full Bayesian rationality.

In the second part of the talk, we consider a more difficult problem: the AI model might be acting, at least in part, to advance the interests of its designer rather than those of its user, and the two might be in tension. We show how market competition between different AI providers can mitigate this problem under only a mild “market alignment” assumption (that the user’s utility function lies in the convex hull of the AI providers’ utility functions), even when no single provider is well aligned. In particular, we show that in all Nash equilibria of the AI providers under this market alignment condition, the user is able to advance her own goals as well as she could have in collaboration with a perfectly aligned AI model.
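To make the classical starting point concrete, here is a minimal sketch of an Aumann-style conversation between two perfect Bayesians. It is not the tractable protocol from the papers: it assumes a small finite state space, a uniform common prior, and commonly known information partitions. The function names (`posterior`, `cell_of`, `agree`) and the nine-state example are illustrative assumptions, not taken from the talk.

```python
from fractions import Fraction


def posterior(event, info):
    """P(event | info) under a uniform common prior on a finite state space."""
    return Fraction(len(event & info), len(info))


def cell_of(partition, state):
    """Return the cell of `partition` that contains `state`."""
    return next(cell for cell in partition if state in cell)


def agree(partitions, event, true_state, states):
    """Agents alternate announcing posteriors until a full round reveals nothing new."""
    public = frozenset(states)  # states consistent with every announcement so far
    history = []
    while True:
        before_round = public
        for partition in partitions:
            # The speaker conditions on its private cell plus the public information.
            said = posterior(event, cell_of(partition, true_state) & public)
            history.append(said)
            # Listeners keep only states in which the speaker would have said the same.
            public = frozenset(
                s for s in public
                if posterior(event, cell_of(partition, s) & public) == said
            )
        if public == before_round:
            return history


# Hypothetical example: nine equally likely states, event {3, 4}, true state 1.
states = range(1, 10)
human = [frozenset({1, 2, 3}), frozenset({4, 5, 6}), frozenset({7, 8, 9})]
model = [frozenset({1, 2, 3, 4}), frozenset({5, 6, 7, 8}), frozenset({9})]
history = agree([human, model], frozenset({3, 4}), true_state=1, states=states)
print([str(p) for p in history])
```

In this example the two parties initially disagree (1/3 versus 1/2) and then settle on 1/3. The sketch relies on exactly the assumptions the abstract calls implausible, namely a shared, correctly specified prior and exact Bayesian updating, which is why the results in the talk relax them.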

This talk describes the results of three papers, all joint work with Natalie Collina, Ira Globus-Harris, Surbhi Goel, Varun Gupta, Emily Ryu, and Mirah Shi:

Tractable Agreement Protocols: https://arxiv.org/abs/2411.19791 (STOC 2025)

Collaborative Prediction: Tractable Information Aggregation via Agreement: https://arxiv.org/abs/2504.06075 (SODA 2026)

Emergent Alignment from Competition: https://arxiv.org/abs/2509.15090
