PART 4: Spark Runtime and Architecture | Apache Spark Code to Cluster | Explain Like you are 5

Автор: JPdemy

Загружено: 2026-02-26

Просмотров: 12

Описание:

🚀 Physical Spark Hierarchy Explained | Driver Node | Worker Nodes | DAG | Catalyst Optimizer | Spark Runtime Architecture

Notes: https://drive.google.com/drive/folder...

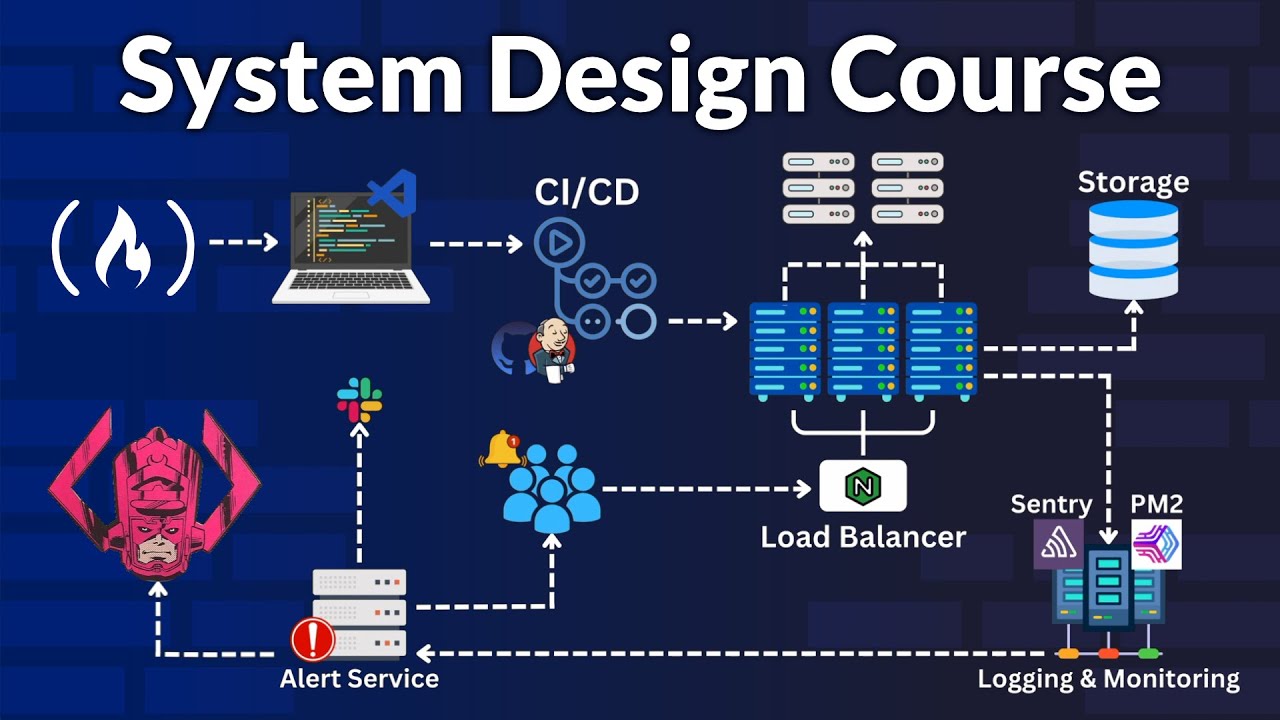

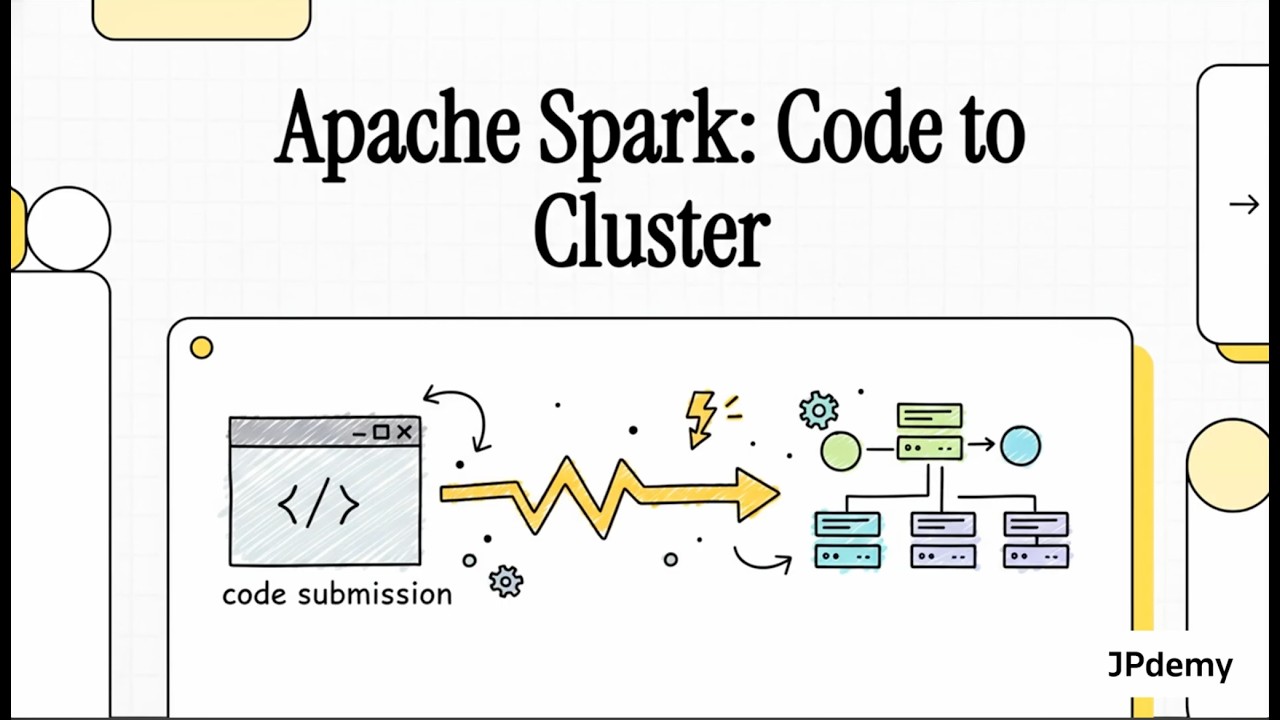

In this video, we break down the complete Physical Spark Hierarchy and explain how Apache Spark works internally — step by step.

If you are learning Apache Spark, PySpark, Big Data, or preparing for Data Engineering interviews, this video will give you deep conceptual clarity about how Spark actually runs behind the scenes.

In this session, you will understand:

✔ What is a Spark Cluster

✔ Driver Node Architecture

✔ Worker Node Structure

✔ Slots and Parallel Task Execution

✔ Spark DAG Scheduler & Task Scheduler

✔ JVM Requirement in Spark

✔ Spark Runtime Architecture

✔ Lazy vs Eager Evaluation

✔ Narrow vs Wide Transformations

✔ Shuffle Operations Explained

✔ Catalyst Optimizer Deep Overview

✔ Logical Plan → Physical Plan → Cost Model

✔ Task Distribution and Cluster Resource Negotiation

✔ Data Skew & Performance Bottlenecks

✔ Spark UI for Monitoring Jobs and Stages

We also discuss how Photon Engine (Delta Engine) improves Spark performance using vectorized execution and CPU-level optimizations.

This video is ideal for:

Data Engineering aspirants

Spark beginners and intermediate learners

Hadoop to Spark transition learners

Big Data developers

Software engineers entering distributed systems

Understanding Spark architecture deeply helps you:

Write optimized Spark jobs

Reduce shuffle

Handle data skew

Clear interviews confidently

Debug performance issues using Spark UI

If you're serious about becoming a Data Engineer, this is foundational knowledge you must master.

Subscribe for more content on:

Apache Spark, PySpark, Airflow, BigQuery, Delta Lake, Distributed Systems and Data Engineering 🚀

Повторяем попытку...

Доступные форматы для скачивания:

Скачать видео

-

Информация по загрузке: