The 0.9B OCR Model That Beats Gemini (GLM-OCR) | Benchmarks + Demo | Live Coding + Q&A (Mar 19th)

Автор: Roboflow

Загружено: 2026-03-20

Просмотров: 1180

Описание:

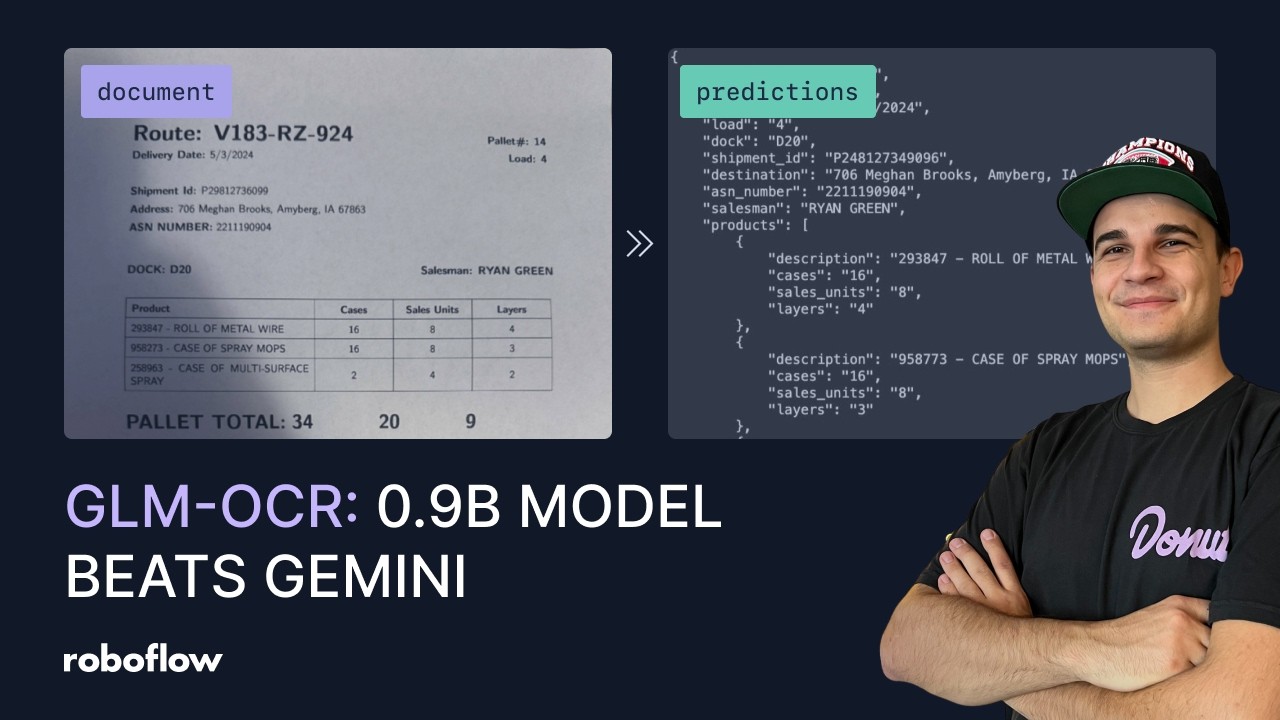

GLM-OCR packs just 0.9B parameters — a 0.4B CogViT visual encoder and a 0.5B GLM language decoder — yet it tops OmniDocBench V1.5 at 94.62, approaching Gemini-level performance. A Multi-Token Prediction mechanism lets it decode multiple tokens per step, keeping latency low enough for edge deployment and production workloads.

In this stream I first benchmark GLM-OCR across 8 diverse datasets — captchas, LaTeX equations, receipts, date stamps, jersey numbers, container serials, tire codes, and license plates — to test its limits on real-world images. Then I build a complete smart parking management system that chains license plate detection, OC-SORT multi-object tracking, and GLM-OCR into a pipeline that reads plates automatically as vehicles enter a lot. Both Colab notebooks are linked below so you can follow along.

Resources:

📓 How to Perform OCR with GLM-OCR: https://colab.research.google.com/git...

📓 Smart Parking Management with GLM-OCR: https://colab.research.google.com/git...

📄 GLM-OCR Paper: https://arxiv.org/abs/2603.10910

🤗 GLM-OCR on HuggingFace: https://huggingface.co/zai-org/GLM-OCR

Stay updated with the projects I'm working on at https://github.com/roboflow and https://github.com/SkalskiP! ⭐

Повторяем попытку...

Доступные форматы для скачивания:

Скачать видео

-

Информация по загрузке:

![Best of Deep House [2026] | Melodic House & Progressive Flow](https://imager.clipsaver.ru/Il-ZpBuC8tA/max.jpg)

![Как представить 10 измерений? [3Blue1Brown]](https://imager.clipsaver.ru/tCIARwH01Ac/max.jpg)