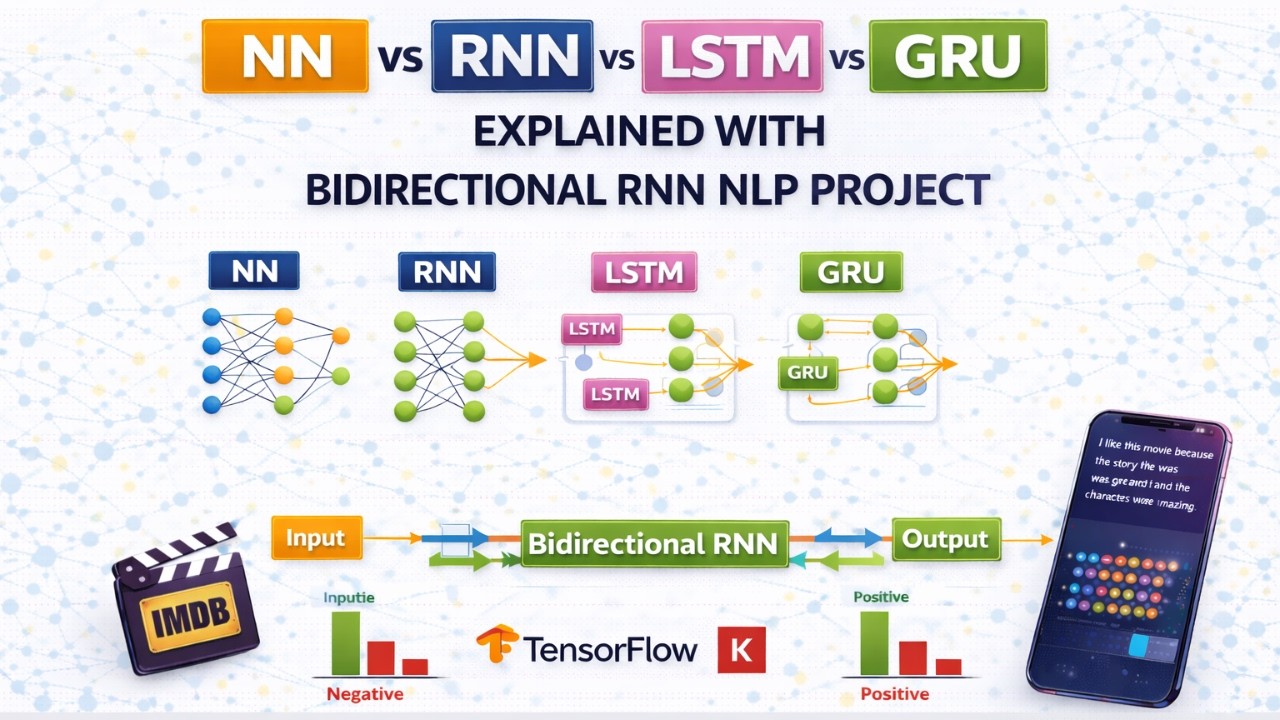

ANN vs RNN vs LSTM vs GRU Explained with Bidirectional RNN NLP Project

Author: Switch 2 AI

Uploaded: 2026-03-03

Views: 1

Description:

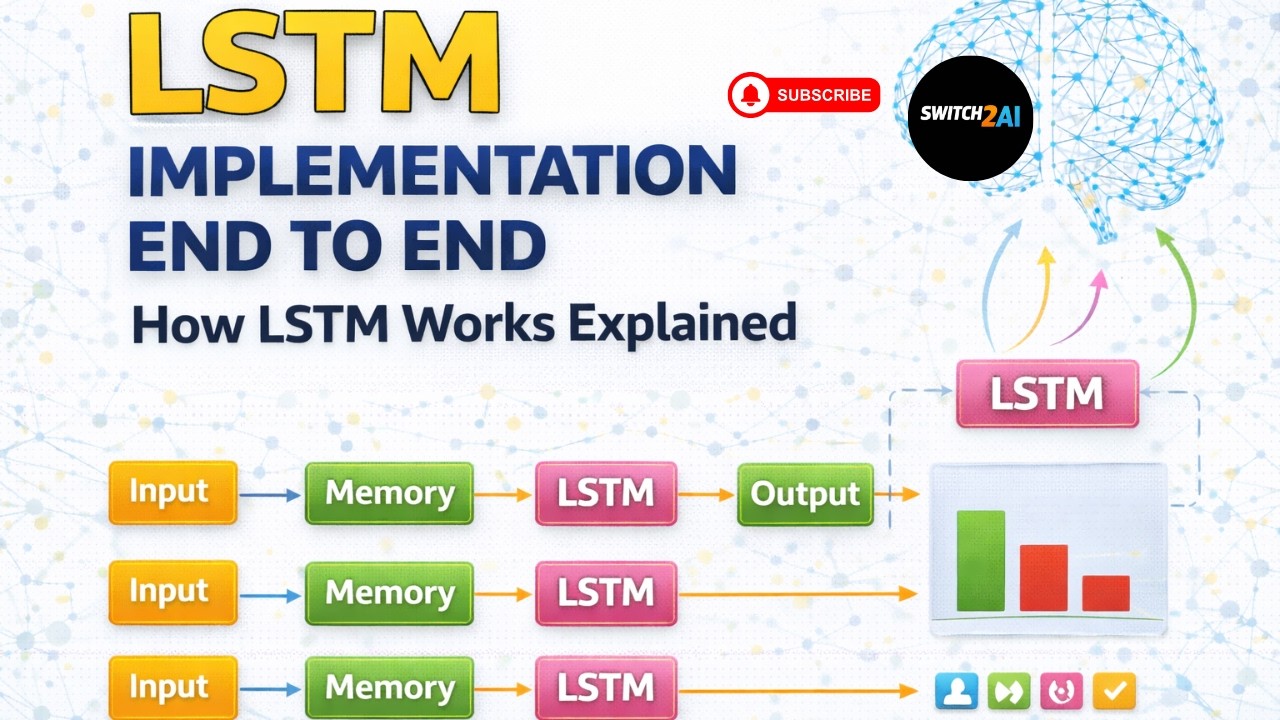

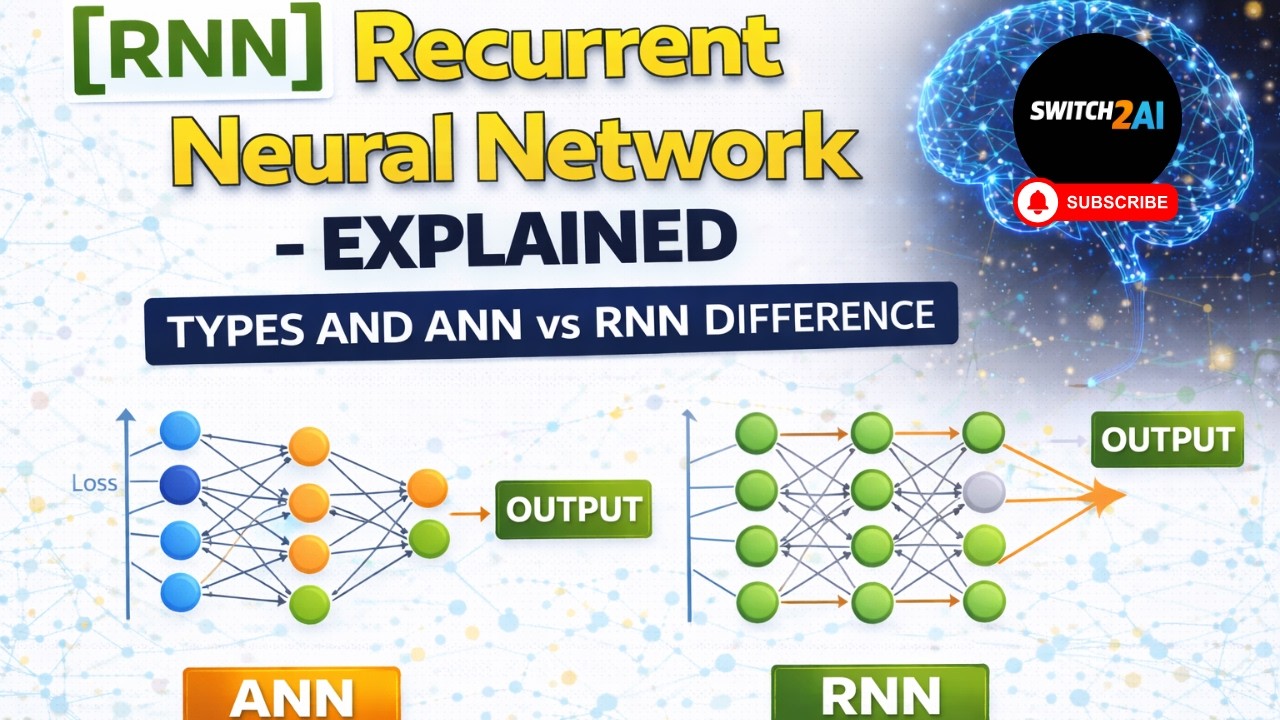

This video walks through the evolution of neural networks for sequential data, from ANN to RNN, LSTM, GRU, and finally bidirectional architectures. We also discuss how these models are used in real-world NLP projects and how industry pipelines are structured.

Here is the GitHub repo link:

https://github.com/switch2ai

You can download all the code, scripts, and documents from the above GitHub repository.

We begin with Artificial Neural Networks (ANN) and look at why they struggle with sequential text data. A plain feed-forward ANN has no mechanism for tracking the order of words in a sentence and requires fixed input and output sizes, which makes it unsuitable for many NLP problems.
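One way to see the word-order problem is to assume the ANN is fed a bag-of-words count vector. That is an assumption made here for illustration; the video may use a different fixed-size representation. Under that assumption, two sentences with different meanings can produce exactly the same input:

```python
from collections import Counter

def bag_of_words(sentence):
    """Count each lowercase token; position information is discarded."""
    return Counter(sentence.lower().split())

a = "the bank approved the loan"
b = "the loan approved the bank"

# Same token counts, different word order.
same_counts = bag_of_words(a) == bag_of_words(b)
same_order = a.split() == b.split()
print(same_counts, same_order)  # True False
```

A network that only sees these counts cannot tell the two sentences apart, which is the gap that recurrent models are meant to close.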

The next step is exploratory data analysis (EDA) and text preprocessing, which includes text cleaning, tokenization, and text normalization.
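A minimal preprocessing sketch using only the standard library might look like the following. The specific rules (lowercasing, dropping URLs and non-letter characters, whitespace tokenization) and the function names `clean_text` and `tokenize` are assumptions chosen for illustration, not necessarily the steps used in the video.

```python
import re

def clean_text(text):
    """Lowercase, drop URLs and non-letter characters, collapse whitespace."""
    text = text.lower()                        # normalization
    text = re.sub(r"https?://\S+", " ", text)  # remove URLs
    text = re.sub(r"[^a-z\s]", " ", text)      # remove digits and punctuation
    return re.sub(r"\s+", " ", text).strip()

def tokenize(text):
    """Split cleaned text on whitespace."""
    return clean_text(text).split()

tokens = tokenize("My CARD was charged twice!! See https://example.com for details.")
print(tokens)
# ['my', 'card', 'was', 'charged', 'twice', 'see', 'for', 'details']
```

Real pipelines often add steps such as stop-word removal or lemmatization; those are left out here to keep the sketch short.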

Then text representation techniques are applied to convert text into numerical vectors that machine learning models can process.
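As one possible illustration of this step, the sketch below maps tokens to integer ids and pads every sequence to a fixed length, which is a common input format for embedding layers in sequence models. The reserved ids `PAD` and `UNK` and the helper names are assumptions; the video may use a different representation such as TF-IDF or pretrained embeddings.

```python
from collections import Counter

PAD, UNK = 0, 1  # reserved ids: padding and out-of-vocabulary tokens

def build_vocab(tokenized_docs, min_count=1):
    """Assign ids starting at 2, most frequent tokens first (ties alphabetical)."""
    counts = Counter(tok for doc in tokenized_docs for tok in doc)
    vocab = {}
    for tok, count in sorted(counts.items(), key=lambda kv: (-kv[1], kv[0])):
        if count >= min_count:
            vocab[tok] = len(vocab) + 2
    return vocab

def encode(tokens, vocab, max_len):
    """Convert tokens to ids, truncate to max_len, then right-pad with PAD."""
    ids = [vocab.get(tok, UNK) for tok in tokens][:max_len]
    return ids + [PAD] * (max_len - len(ids))

docs = [["loan", "payment", "late"], ["card", "payment", "declined"]]
vocab = build_vocab(docs)
encoded = encode(["card", "payment", "stolen"], vocab, max_len=5)
print(encoded)  # [3, 2, 1, 0, 0]
```

The unseen word "stolen" maps to `UNK`, and the two trailing zeros are padding.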

After that, we select an appropriate model, such as an RNN, LSTM, GRU, or Transformer-based architecture, and train it on the dataset.
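To make the bidirectional idea concrete, here is a dependency-free sketch of the forward pass of a simple (Elman) RNN run in both directions, with the two hidden states concatenated at each time step. It is not the code from the video: the weights are random and untrained, and the helper names are hypothetical. LSTM and GRU cells replace the single `tanh` update below with gated updates, and in practice a deep learning framework would be used.

```python
import math
import random

def make_cell(input_size, hidden_size, rng):
    """Random weights for one simple RNN cell."""
    def mat(rows, cols):
        return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]
    return {"W_x": mat(hidden_size, input_size),
            "W_h": mat(hidden_size, hidden_size),
            "b": [0.0] * hidden_size}

def rnn_pass(cell, inputs):
    """Apply h_t = tanh(W_x x_t + W_h h_{t-1} + b); return every hidden state."""
    h = [0.0] * len(cell["b"])
    states = []
    for x in inputs:
        h = [math.tanh(sum(w * xi for w, xi in zip(cell["W_x"][j], x))
                       + sum(w * hi for w, hi in zip(cell["W_h"][j], h))
                       + cell["b"][j])
             for j in range(len(h))]
        states.append(h)
    return states

def bidirectional(fwd_cell, bwd_cell, inputs):
    """Concatenate forward and backward hidden states at each time step."""
    fwd = rnn_pass(fwd_cell, inputs)
    bwd = rnn_pass(bwd_cell, inputs[::-1])[::-1]  # re-align to original order
    return [f + b for f, b in zip(fwd, bwd)]

rng = random.Random(0)
seq = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(4)]  # 4 steps, 3 features
fwd_cell = make_cell(3, 2, rng)
bwd_cell = make_cell(3, 2, rng)
outputs = bidirectional(fwd_cell, bwd_cell, seq)
print(len(outputs), len(outputs[0]))  # 4 4
```

Each output vector has four values: two from the pass that has read the sequence up to that step, and two from the pass that has read it from the end back to that step.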

The next step is model evaluation, where we define evaluation metrics and measure model performance.
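The description does not say which metrics are used. As an assumption, the sketch below computes accuracy and macro-averaged F1, two common choices for multi-class text classification, on a small made-up set of labels. Macro F1 weights every class equally, which makes it more informative than accuracy when classes are imbalanced.

```python
def per_class_scores(y_true, y_pred):
    """Return {label: (precision, recall, f1)} for every label seen."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = {}
    for label in labels:
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        scores[label] = (precision, recall, f1)
    return scores

def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def macro_f1(y_true, y_pred):
    """Unweighted mean of the per-class F1 scores."""
    scores = per_class_scores(y_true, y_pred)
    return sum(f1 for _, _, f1 in scores.values()) / len(scores)

# Made-up labels for illustration only.
y_true = ["Loan", "Loan", "Card", "Card", "Others", "Loan"]
y_pred = ["Loan", "Card", "Card", "Card", "Loan", "Loan"]
print(round(accuracy(y_true, y_pred), 3), round(macro_f1(y_true, y_pred), 3))
# 0.667 0.489
```

Here the "Others" class is never predicted correctly, which pulls macro F1 well below accuracy.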

Once the model performs well, it moves to the deployment stage, where it is exposed through an API using a framework such as FastAPI, containerized with Docker, and deployed on a cloud platform.

Finally, the system enters the monitoring and maintenance stage, where model performance is tracked, errors are analyzed, and models are retrained periodically.
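One simple way to track performance after deployment, sketched here under the assumption that true labels eventually become available for routed complaints, is to keep a rolling window of recent outcomes and flag the model for retraining when accuracy in that window falls below a threshold. The class name, window size, and threshold are all hypothetical.

```python
from collections import deque

class RollingAccuracyMonitor:
    """Track accuracy over the most recent `window` labeled predictions."""

    def __init__(self, window=100, threshold=0.8):
        self.results = deque(maxlen=window)  # oldest results drop off automatically
        self.threshold = threshold

    def record(self, predicted, actual):
        self.results.append(predicted == actual)

    def accuracy(self):
        return sum(self.results) / len(self.results) if self.results else None

    def needs_retraining(self):
        # Only judge the model once the window is full.
        full = len(self.results) == self.results.maxlen
        return full and self.accuracy() < self.threshold

monitor = RollingAccuracyMonitor(window=4, threshold=0.75)
for predicted, actual in [("Loan", "Loan"), ("Card", "Loan"),
                          ("Card", "Card"), ("Others", "Card")]:
    monitor.record(predicted, actual)

print(monitor.accuracy(), monitor.needs_retraining())  # 0.5 True
```

The misclassified pairs recorded by such a monitor are also a natural starting point for error analysis.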

In the practical example discussed in this video, we work on a complaint classification system where customer complaints must be automatically routed to the correct department. The dataset contains consumer complaints and product categories. The goal of the model is to classify each complaint into the appropriate department such as Loan, Card, Credit Report, Services, or Others.

We perform exploratory analysis, remove unnecessary columns, handle missing values, and merge related categories after consulting domain experts. Merging related categories reduces class imbalance and improves classification performance.
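The cleanup steps above can be sketched in plain Python as follows. The column names, the sample rows, and the product-to-department mapping are invented for illustration; the real mapping would come from the dataset and the domain experts mentioned above.

```python
from collections import Counter

# Hypothetical mapping from raw product categories to target departments.
CATEGORY_MAP = {
    "Mortgage": "Loan",
    "Student loan": "Loan",
    "Credit card": "Card",
    "Prepaid card": "Card",
    "Credit reporting": "Credit Report",
}

def prepare(rows):
    """Keep only the needed columns, drop empty complaints, merge categories."""
    prepared = []
    for row in rows:
        text = (row.get("complaint") or "").strip()
        if not text:
            continue  # handle missing values by dropping the row
        department = CATEGORY_MAP.get(row.get("product"), "Others")
        prepared.append({"complaint": text, "department": department})
    return prepared

# Made-up rows; "zip" stands in for an unnecessary column.
rows = [
    {"complaint": "Late fee on my mortgage", "product": "Mortgage", "zip": "00000"},
    {"complaint": None, "product": "Credit card", "zip": "00000"},
    {"complaint": "Card declined abroad", "product": "Prepaid card", "zip": "00000"},
    {"complaint": "Wrong balance shown", "product": "Money transfer", "zip": "00000"},
]
prepared = prepare(rows)
department_counts = Counter(r["department"] for r in prepared)
print(department_counts)
```

Checking `department_counts` before and after merging is a quick way to see how much the mapping evens out the class distribution.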

This end-to-end explanation helps learners understand both the theoretical concepts of sequence models and the real-world workflow followed by machine learning engineers in industry.

This video is perfect for NLP learners, machine learning engineers, AI interview preparation, and anyone interested in understanding real-world deep learning pipelines.

Channel Name: Switch 2 AI

#NeuralNetworks

#RNN

#LSTM

#GRU

#BidirectionalRNN

#DeepLearning

#NLP

#MachineLearning

#AIProject

#Switch2AI

ANN vs RNN vs LSTM vs GRU

recurrent neural network tutorial

LSTM vs GRU difference

bidirectional RNN explained

sequence models deep learning

NLP neural networks explained

RNN limitations explained

GRU architecture explained

LSTM architecture explained

deep learning for NLP

NLP project lifecycle

machine learning project pipeline

AI complaint classification project

text classification NLP

Switch 2 AI

