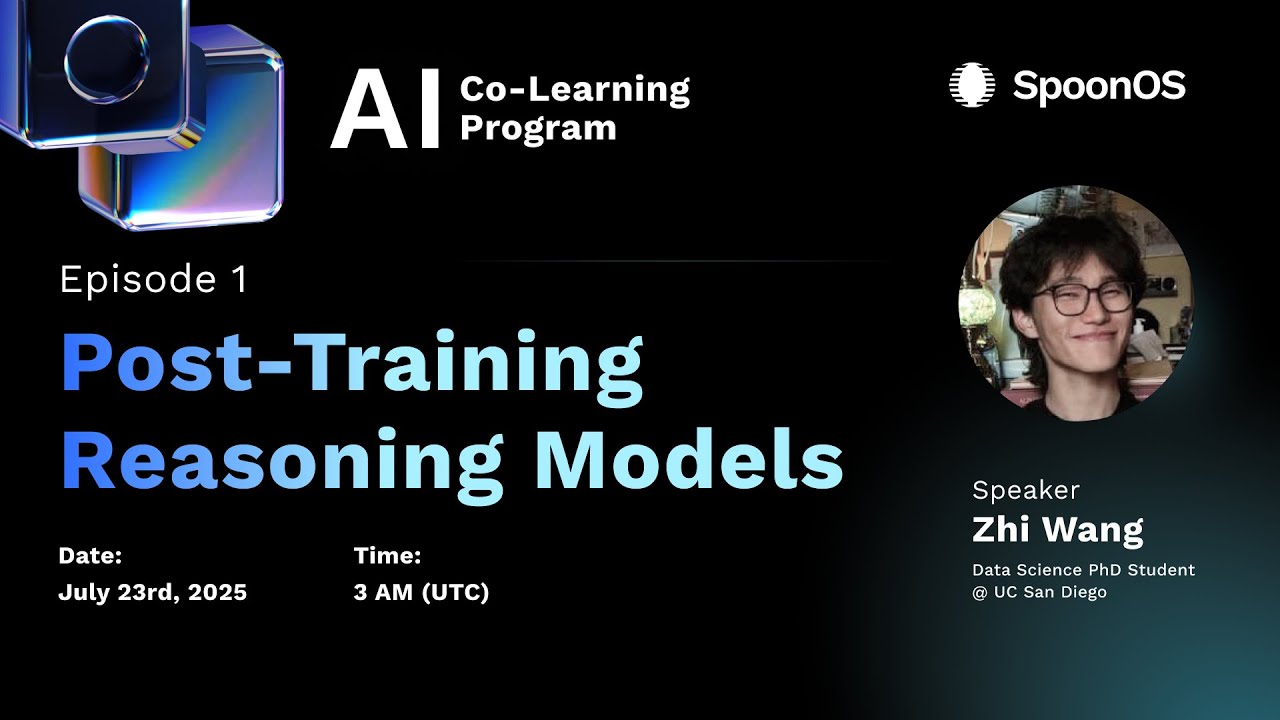

Post Training Reasoning Models

Author: SpoonOS

Uploaded: 2025-07-22

Views: 228

Description:

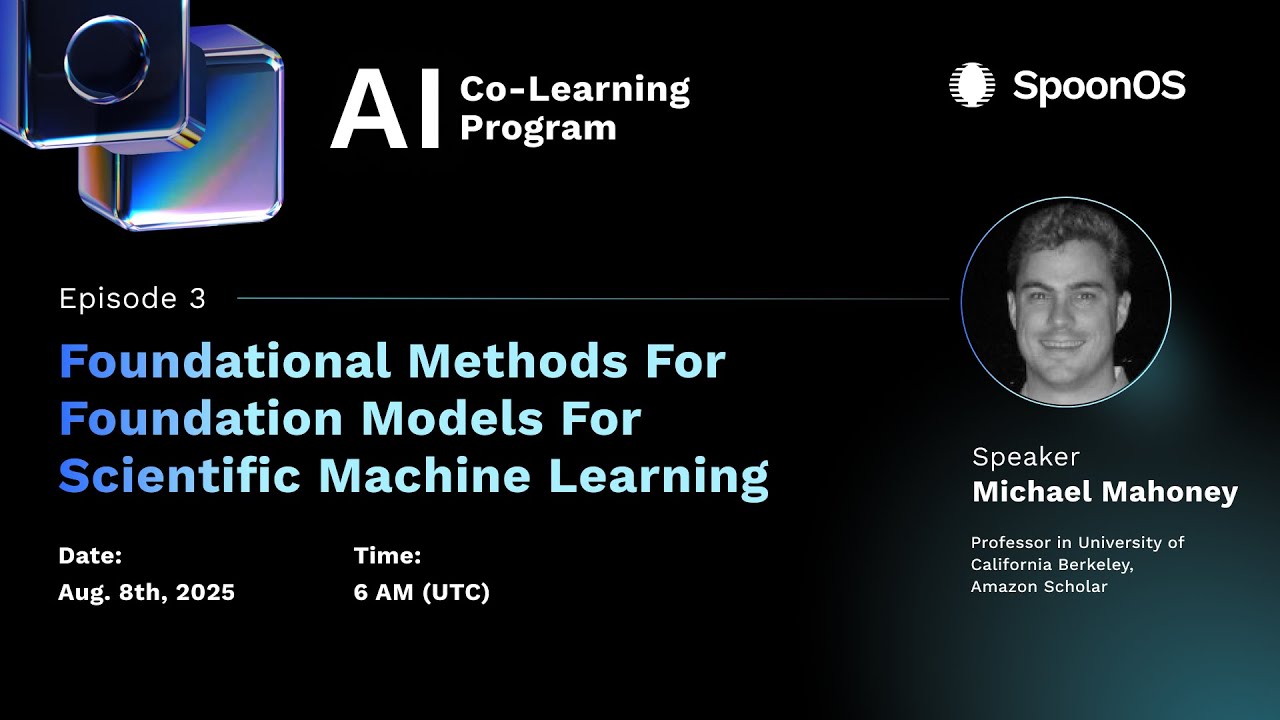

Post-Training Reasoning Models: How LLMs Learn to Think and Act

Basics

CoT: Chain-of-Thought

ToT: Tree-of-Thought

SFT: Supervised Fine-Tuning

RL: Reinforcement Learning

RLVR: Reinforcement Learning with Verifiable Rewards

Key Topics:

Motivation for post-training: overcoming scaling limits of pre-training and enabling LLMs to "think"

Introducing temporal reasoning via Chain-of-Thought (CoT) and Tree-of-Thought (ToT)

Supervised Fine-Tuning (SFT) on reasoning data: objectives and benefits

Reinforcement Learning with Verifiable Rewards (RLVR) and GRPO (Group Relative Policy Optimization)
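To make the RLVR and GRPO topic concrete, here is a minimal sketch of the two ideas as they are commonly described: a reward that can be checked programmatically, and advantages computed relative to a group of completions sampled for the same prompt. The function names, the `Answer:` output convention, and the `eps` constant are assumptions chosen for illustration and are not taken from the talk.

```python
import re
from statistics import mean, pstdev


def verifiable_reward(completion: str, reference_answer: str) -> float:
    """Hypothetical checker: 1.0 if the text ends with 'Answer: <reference>', else 0.0."""
    match = re.search(r"Answer:\s*(.+?)\s*$", completion.strip())
    if match is None:
        return 0.0
    return 1.0 if match.group(1) == reference_answer else 0.0


def group_relative_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """Normalize each reward against the mean and std of its own group.

    This is the group-relative baseline usually associated with GRPO:
    no learned value function, only statistics of the sampled group.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std over the group
    return [(r - mu) / (sigma + eps) for r in rewards]


# A group of sampled completions for the prompt "What is 2 + 2?"
completions = [
    "2 + 2 = 4. Answer: 4",
    "I think it is five. Answer: 5",
    "Not sure.",
    "Adding gives 4. Answer: 4",
]
rewards = [verifiable_reward(c, "4") for c in completions]
advantages = group_relative_advantages(rewards)
```

Correct completions receive positive advantages and incorrect ones negative advantages. If every completion in a group earns the same reward, all advantages are zero, so that group contributes no learning signal.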

Applications & Insights:

Practical design of reasoning-oriented pipelines for math and code tasks

Techniques to enhance reasoning during inference without retraining

Discussion on current limitations and future research directions in scalable reasoning for LLMs
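The description does not name which inference-time techniques the talk covers. As one illustrative assumption, self-consistency samples several chain-of-thought completions and keeps the most frequent final answer. The sketch below shows only the voting step; the `self_consistency` helper is hypothetical, and the sampled answers are hard-coded in place of real model calls.

```python
from collections import Counter
from typing import Optional


def self_consistency(answers: list[Optional[str]]) -> Optional[str]:
    """Majority vote over final answers; None entries (unparseable outputs) are ignored."""
    votes = Counter(a for a in answers if a is not None)
    if not votes:
        return None
    return votes.most_common(1)[0][0]


# Final answers extracted from five sampled reasoning chains (placeholder data).
sampled_answers = ["4", "5", "4", None, "4"]
final_answer = self_consistency(sampled_answers)
```

No weights are updated here: the gain comes purely from spending more compute at inference time.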

Open Questions

How can we best integrate the stability of SFT with the optimization power of RL?

How do we optimize the RL process itself (e.g., the 80/20 rule, selective rollouts)?

Can we encourage continuous, internal "thought" processes in LLMs (e.g., recurrent blocks, chain of continuous thoughts)?

Co-Learning Website: https://xspoonai.github.io/spoon-cole...

Join our Discord server to learn more: discord.gg/XkxHMwGtSC
