MLLMs: Solving the Text-to-Pixel Modality Gap

Author: AI Research Roundup

Uploaded: 2026-03-10

Views: 9

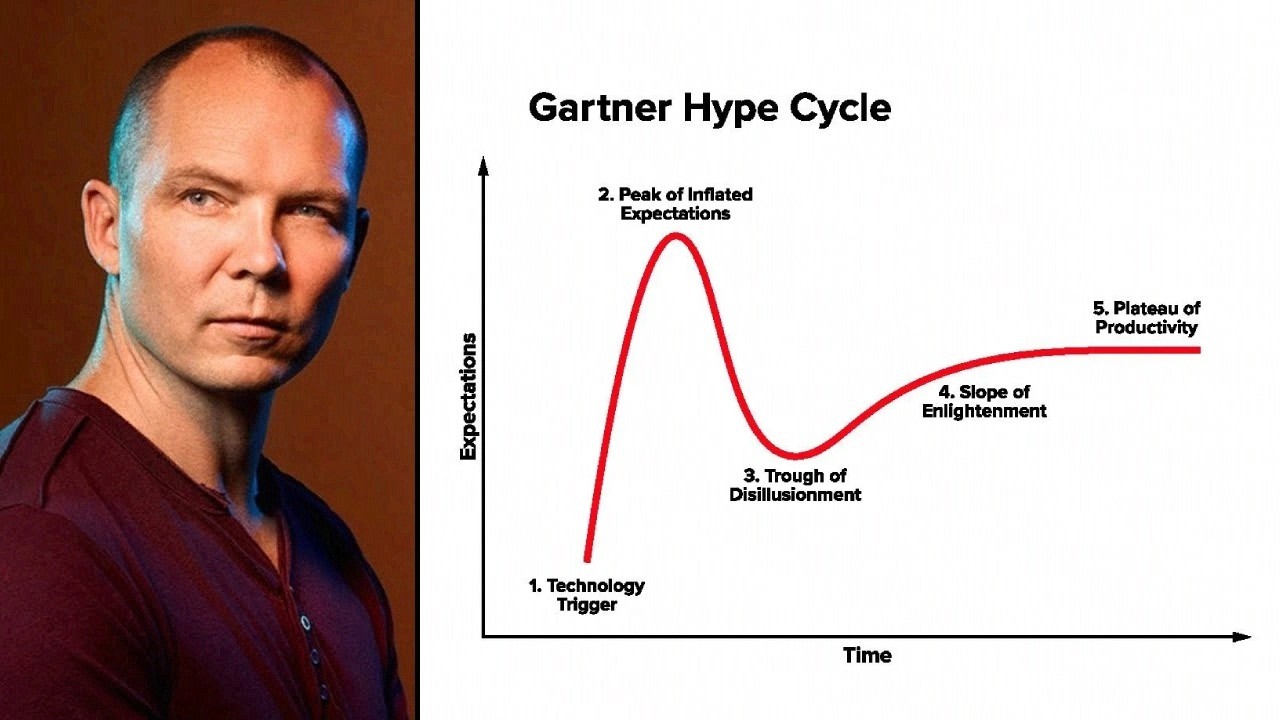

Description: In this AI Research Roundup episode, Alex discusses the paper 'Reading, Not Thinking: Understanding and Bridging the Modality Gap When Text Becomes Pixels in Multimodal LLMs'. The study investigates the modality gap: Multimodal Large Language Models perform worse when text is presented as an image than when the same text is supplied as tokens. The researchers evaluated seven major models, including Qwen2.5-VL and GPT-5.2, on benchmarks involving synthetic and realistic document images. The findings show that models struggle significantly with synthetic math tasks rendered as pixels, yet often excel at reading natural document images. The research includes a grounded-theory error analysis of over 4,000 examples to identify failure points such as rendering resolution and font. Ultimately, the paper provides a framework for understanding and bridging the gap between visual perception and textual reasoning. Paper URL: https://arxiv.org/abs/2603.09095 #AI #MachineLearning #DeepLearning #MultimodalLLM #VisionLanguageModels #OCR #ModalityGap
