Deploying AI Runtimes

Author: ecosystem Ai

Uploaded: 2026-02-27

Views: 31

Description:

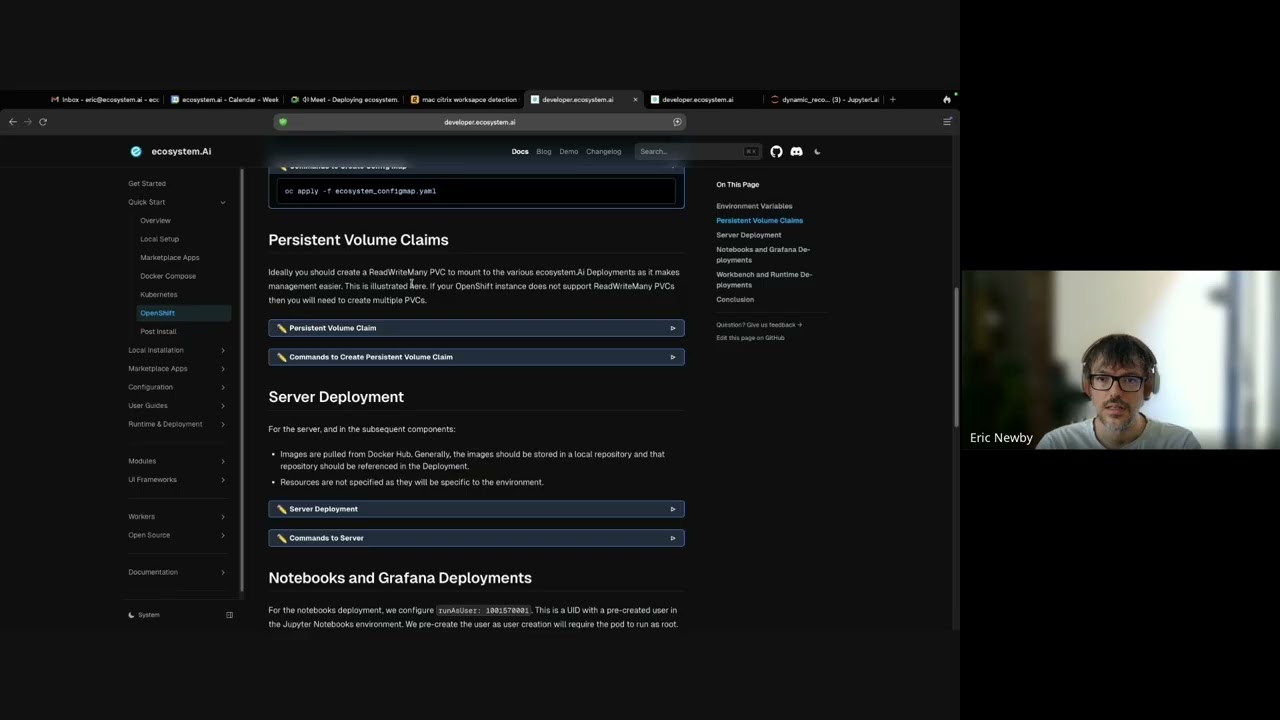

Eric explains how to deploy the Ecosystem AI runtime into production, focusing on Kubernetes/OpenShift. He covers three deployment patterns: (1) permanently running runtime pods that accept pushed configuration updates, optionally compiling custom Java pre-/post-scoring logic or reward functions; (2) the recommended setup, which adds a separate Runtime MCP container that exposes MCP protocol tools to agents/LLMs, enables MLflow model integration, and supports custom Python APIs; and (3) a less agile approach that uses versioned images with embedded configuration, suited to strict rollback requirements.
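To make the three patterns concrete, the sketch below builds minimal Kubernetes `apps/v1` Deployment manifests as Python dicts. It is a hypothetical illustration, not Ecosystem AI's actual manifests: the image names, tags, and the choice to run the Runtime MCP container as a second container in the same pod (rather than as its own Deployment) are all assumptions. The versioned-image pattern is modeled simply as a distinct image tag whose embedded configuration is fixed, so rolling back means redeploying an earlier tag.

```python
import json


def runtime_deployment(name: str, image: str, with_mcp: bool = False,
                       mcp_image: str = "", replicas: int = 2) -> dict:
    """Build a minimal Kubernetes Deployment manifest (hypothetical sketch).

    - Always-on pattern: runtime container only; configuration is pushed later.
    - Recommended pattern: with_mcp=True adds a separate Runtime MCP container
      (assumed here to share the pod with the runtime).
    - Versioned pattern: pass an image tag that embeds a fixed configuration.
    """
    if with_mcp and not mcp_image:
        raise ValueError("mcp_image is required when with_mcp=True")

    containers = [{"name": "runtime", "image": image}]
    if with_mcp:
        containers.append({"name": "runtime-mcp", "image": mcp_image})

    # The selector must match the pod template labels; copies avoid shared mutation.
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": dict(labels)},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {"containers": containers},
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical registry paths and tags, for illustration only.
    always_on = runtime_deployment(
        "ecosystem-runtime", "registry.example.com/ecosystem-runtime:stable")
    recommended = runtime_deployment(
        "ecosystem-runtime", "registry.example.com/ecosystem-runtime:stable",
        with_mcp=True, mcp_image="registry.example.com/ecosystem-runtime-mcp:stable")
    versioned = runtime_deployment(
        "ecosystem-runtime", "registry.example.com/ecosystem-runtime-config:v1.4.0")
    print(json.dumps(recommended, indent=2))
```

The design trade-off mirrors the description: the first two patterns keep a stable image and change behavior by pushing configuration, while the versioned pattern changes the image itself, which is slower to iterate on but makes each rollback target an immutable artifact.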

He also demonstrates Python notebook automation for dynamic and static model deployments: authentication, syncing and compiling code, optional Cassandra config and API config updates, pushing configuration, and testing calls. Finally, he discusses scaling considerations and recommends using managed LLM services such as Amazon Bedrock rather than running LLMs inside Kubernetes.
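The notebook workflow follows a fixed order with two optional steps. The sketch below captures only that ordering and a fail-fast loop; it does not call any Ecosystem AI API. The step names and the idea of passing each step in as a callable are hypothetical, so real notebook code would plug in whatever authentication, sync, compile, push, and test calls the platform actually provides.

```python
from typing import Callable, Dict, List

# Hypothetical step names, in the order the demo describes.
ALL_STEPS = [
    "authenticate",
    "sync_code",
    "compile",
    "update_cassandra_config",  # optional
    "update_api_config",        # optional
    "push_configuration",
    "test_call",
]


def run_deployment(steps: Dict[str, Callable[[], None]],
                   update_cassandra: bool = False,
                   update_api_config: bool = False) -> List[str]:
    """Run the deployment steps in order and return the names executed.

    Any exception raised by a step stops the run, so configuration is never
    pushed after a failed compile or authentication.
    """
    order = ["authenticate", "sync_code", "compile"]
    if update_cassandra:
        order.append("update_cassandra_config")
    if update_api_config:
        order.append("update_api_config")
    order += ["push_configuration", "test_call"]

    executed: List[str] = []
    for name in order:
        steps[name]()  # raises KeyError if a required step was not supplied
        executed.append(name)
    return executed


if __name__ == "__main__":
    # Stub steps that only print, standing in for real platform calls.
    stubs = {name: (lambda n=name: print(f"running {n}")) for name in ALL_STEPS}
    run_deployment(stubs, update_api_config=True)
```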

00:54 Deployment Patterns Overview

02:54 Always-On Runtime Pods

04:57 Custom Java Logic Builds

07:03 Runtime MCP Benefits

10:19 Versioned Image Deployments

13:02 Demo Setup Notebooks

13:15 Dynamic Model Push Workflow

19:17 Static Models with MLflow

21:52 Scaling and LLM Integration Q&A
