Why AI Makes Up Facts: The 'Confidently Wrong' Problem Explained

Author: SudeeptaAIproducts

Uploaded: 2025-11-11

Views: 26

Description:

AI can sound so confident, even when it’s completely wrong. 😅

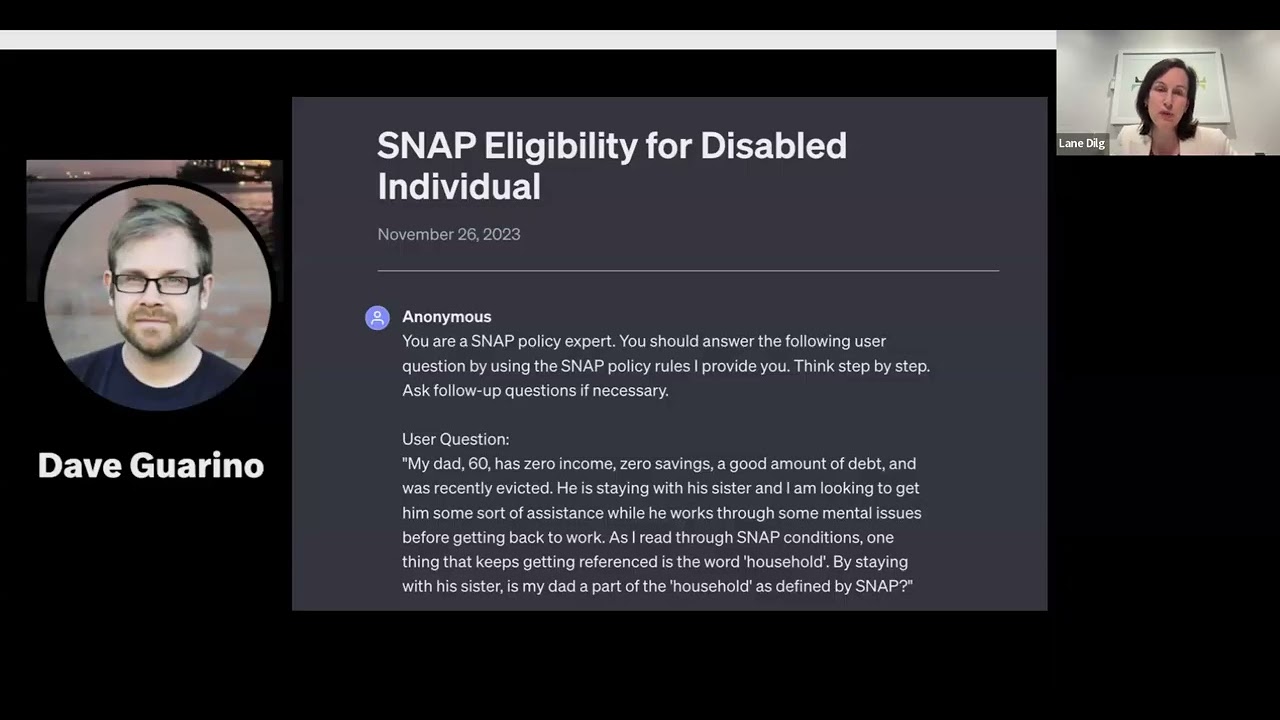

In this video, I break down why large language models like ChatGPT sometimes make things up — a phenomenon called hallucination.

You’ll learn:

🧠 What “hallucination” means in AI

🔍 How language models are trained and why they predict words, not truth

📉 A real example of a hallucination that cost a corporate giant

⚙️ How hallucinations happen and ways to reduce them

💬 Whether it’s actually possible to eliminate hallucinations

Because sometimes, AI isn’t lying — it’s just confidently wrong.
