07. Adversarial Machine Learning

Author: SprintML-Lab

Uploaded: 2024-05-05

Views: 507

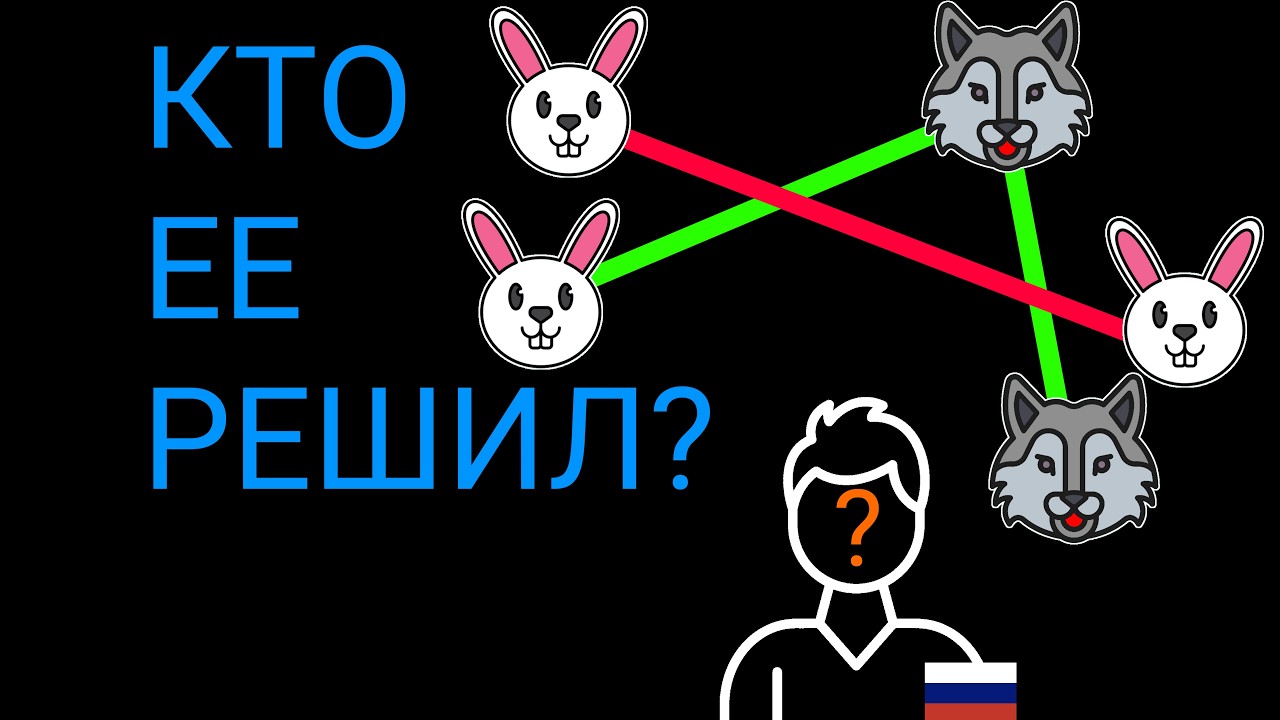

Description: We first discuss the mismatch between the training-time and inference-time data distributions, and explain that an adversary may present the model with the worst-case distribution shift. In the broadest sense, these worst cases are adversarial perturbations, which we define as small, imperceptible changes to the input that alter the model's predictions. Depending on the threat model, attacks divide into white-box and black-box attacks; this lecture covers both.

White-box attacks have access to the model architecture, parameters, and gradients. The gradient gives the direction in which to manipulate the input so that the loss for the correct class y increases and the input is misclassified. We discuss the FGSM and PGD attacks.

Black-box attacks have no direct access to the model. Score-based black-box attacks require meaningful scores, such as probabilities (the output of the softmax layer) or logits, which can be used to approximate the gradient and find an adversarial perturbation. In decision-based black-box attacks, the adversary can access only the final label returned by the model.

On the defense side, we focus on adversarial training, which combines the training process with the generation of adversarial examples: for each input x, we find an adversarial example and feed it into training with the correct label y. Note that seven of the nine defenses accepted at ICLR 2018 were broken by adaptive white-box attacks, and adversarial training was the most robust. Many of the other defenses relied on security by obscurity, e.g., gradient masking or obfuscation. At the end of the lecture, we also present methods that provide robustness for self-supervised learning.
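To make the attacks and the defense concrete, here is a minimal NumPy sketch. It assumes a toy binary logistic-regression model in place of a neural network, so the input gradient (p − y)·w can be written by hand, and an L∞ threat model with budget eps. All function names are hypothetical. The score-based black-box estimate uses central finite differences over probability outputs, which is one common way to approximate the gradient and not necessarily the method presented in the lecture. PGD is shown without the random start often used in practice, and adversarial training uses FGSM as the inner attack (PGD could be substituted for a stronger one).

```python
import numpy as np

# Hypothetical toy setup: a binary logistic-regression "model" stands in for a
# neural network so every gradient can be written by hand. L-inf threat model.


def sigmoid(z):
    # Numerically stable logistic function.
    return 0.5 * (1.0 + np.tanh(0.5 * z))


def predict_proba(X, w, b):
    """Model scores: P(y = 1 | x) for each row of X (shape (n, d))."""
    return sigmoid(X @ w + b)


def bce_from_proba(p, y):
    """Per-example binary cross-entropy computed from probabilities only."""
    p = np.clip(p, 1e-12, 1 - 1e-12)
    return -(y * np.log(p) + (1 - y) * np.log(1 - p))


def input_gradient(X, y, w, b):
    """White-box: exact gradient of the loss w.r.t. the input, (p - y) * w."""
    return (predict_proba(X, w, b) - y)[:, None] * w[None, :]


def fgsm(X, y, w, b, eps):
    """FGSM: a single step of size eps along sign(grad_x loss)."""
    return X + eps * np.sign(input_gradient(X, y, w, b))


def pgd(X, y, w, b, eps, alpha, steps):
    """PGD: repeated signed-gradient steps of size alpha, each followed by a
    projection back onto the L-inf ball of radius eps (no random start here)."""
    X_adv = X.copy()
    for _ in range(steps):
        X_adv = X_adv + alpha * np.sign(input_gradient(X_adv, y, w, b))
        X_adv = np.clip(X_adv, X - eps, X + eps)  # projection onto the ball
    return X_adv


def estimate_gradient(query, X, y, h=1e-4):
    """Score-based black-box: approximate grad_x loss by central finite
    differences, using only probabilities returned by the opaque `query`
    (2 * d queries per example)."""
    grad = np.zeros_like(X)
    for i in range(X.shape[1]):
        e = np.zeros(X.shape[1])
        e[i] = h
        up = bce_from_proba(query(X + e), y)
        down = bce_from_proba(query(X - e), y)
        grad[:, i] = (up - down) / (2 * h)
    return grad


def adversarial_training(X, y, eps, lr=0.5, epochs=200):
    """Adversarial training: craft adversarial examples (FGSM here) against the
    current model, then take a gradient step on them with the correct labels y."""
    w, b = np.zeros(X.shape[1]), 0.0
    for _ in range(epochs):
        X_adv = fgsm(X, y, w, b, eps)
        err = predict_proba(X_adv, w, b) - y  # d(loss)/d(logit)
        w = w - lr * (err[:, None] * X_adv).mean(axis=0)
        b = b - lr * err.mean()
    return w, b


# Usage: two Gaussian blobs, one adversarially trained model, three attacks.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(2.0, 1.0, (100, 2)), rng.normal(-2.0, 1.0, (100, 2))])
y = np.concatenate([np.ones(100), np.zeros(100)])
w, b = adversarial_training(X, y, eps=0.1)

x0, y0 = np.array([[0.5, 0.5]]), np.array([1.0])
x_fgsm = fgsm(x0, y0, w, b, eps=0.1)
x_pgd = pgd(x0, y0, w, b, eps=0.1, alpha=0.02, steps=10)
g_est = estimate_gradient(lambda Z: predict_proba(Z, w, b), x0, y0)
print("clean loss:", bce_from_proba(predict_proba(x0, w, b), y0))
print("FGSM loss: ", bce_from_proba(predict_proba(x_fgsm, w, b), y0))
print("PGD loss:  ", bce_from_proba(predict_proba(x_pgd, w, b), y0))
print("true grad:", input_gradient(x0, y0, w, b), "estimate:", g_est)
```

Because this toy model is linear, the worst case inside an L∞ ball is exactly the FGSM corner, so PGD ends up at the same point here; on a nonlinear network the gradient sign changes along the path and the iterated, projected steps of PGD typically find stronger perturbations than a single FGSM step.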
