Tutorial-45: Adam optimizer explained in detail | Simplified | Deep Learning | Telugu

Author: Algorithm Avenue

Uploaded: 2025-09-23

Views: 543

Description:

Connect with us on Social Media!

📸 Instagram: https://www.instagram.com/algorithm_a...

🧵 Threads: https://www.threads.net/@algorithm_av...

📘 Facebook: / algorithmavenue7

🎮 Discord: / discord

In this video, we break down Adam (Adaptive Moment Estimation), one of the most widely used optimization algorithms in deep learning. 🚀

You’ll learn:

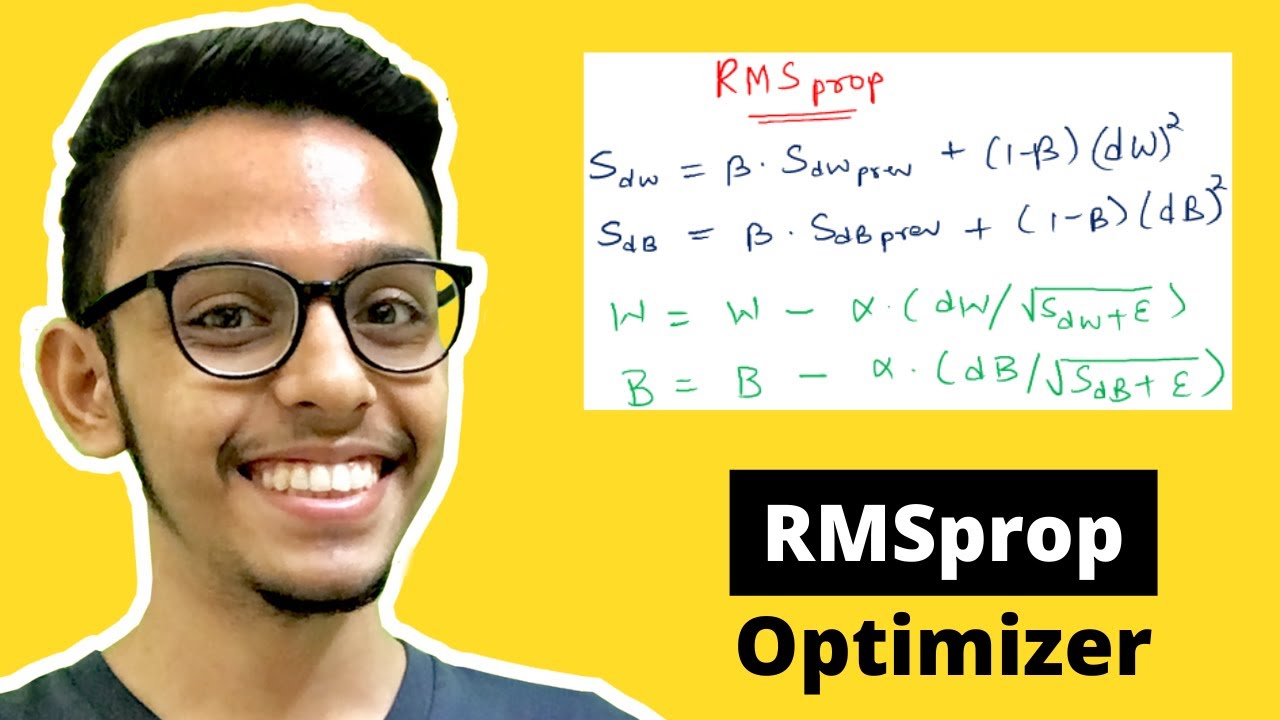

✅ Why Adam is often preferred over RMSProp and SGD

✅ The intuition behind momentum (1st moment) and adaptive learning rates (2nd moment)

✅ The full update rule explained step by step

✅ The role of hyperparameters like lr, beta1, beta2, and eps

✅ Bias correction and why it’s important

✅ Practical examples of Adam in PyTorch
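The topics above fit together in a few lines of code: momentum, adaptive scaling, bias correction, and the lr/beta1/beta2/eps hyperparameters. Below is a minimal sketch of the standard Adam update for a single scalar parameter in plain Python. It is not the code used in the video. In the sketch, m is the running average of gradients (1st moment) and v is the running average of squared gradients (2nd moment). Both are bias-corrected before the step. The helper names adam_step and minimize and the toy function f(x) = (x - 3)^2 are hypothetical, chosen only for illustration.

```python
import math


def adam_step(param, grad, m, v, t, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update for a single scalar parameter (illustrative sketch).

    t is the 1-based step count, needed for bias correction.
    Returns the updated (param, m, v).
    """
    # 1st moment: exponential moving average of gradients (momentum)
    m = beta1 * m + (1 - beta1) * grad
    # 2nd moment: exponential moving average of squared gradients
    v = beta2 * v + (1 - beta2) * grad * grad
    # Bias correction: m and v start at 0, so early averages are too small
    m_hat = m / (1 - beta1 ** t)
    v_hat = v / (1 - beta2 ** t)
    # Step size adapts per parameter via 1 / (sqrt(v_hat) + eps)
    param = param - lr * m_hat / (math.sqrt(v_hat) + eps)
    return param, m, v


def minimize(grad_fn, x0, steps=2000, **hparams):
    """Hypothetical helper: run Adam on a 1-D function given its gradient."""
    x, m, v = x0, 0.0, 0.0
    for t in range(1, steps + 1):
        x, m, v = adam_step(x, grad_fn(x), m, v, t, **hparams)
    return x


if __name__ == "__main__":
    # Toy problem (assumption): f(x) = (x - 3)^2, gradient 2(x - 3), minimum at x = 3
    x_min = minimize(lambda x: 2 * (x - 3), x0=0.0, lr=0.1)
    print(f"x after Adam: {x_min:.4f}")
```

Bias correction matters most on the first step. There, m_hat equals the raw gradient and v_hat equals its square, so the step is roughly lr times the sign of the gradient rather than a tiny value shrunk by the zero initialization. PyTorch provides the same algorithm as torch.optim.Adam(model.parameters(), lr=1e-3, betas=(0.9, 0.999), eps=1e-8), where model is your network. It applies this update element-wise to every parameter tensor.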

Whether you’re new to machine learning or brushing up on deep learning fundamentals, this tutorial will give you the complete picture of the Adam optimizer.

👉 If you found this useful, don’t forget to Like, Share, and Subscribe for more awesome content!

#adamoptimizer #adam #deeplearning #machinelearning #ai #artificialintelligence #neuralnetworks #gradientdescent #optimizationalgorithm #adaptiveoptimizers #pytorch #tensorflow #keras #backpropagation #aiexplained #deeplearningforbeginners #mlforbeginners #neuralnetworktraining #optimizersindeeplearning #adaptivelearningrate #sgd #rmsprop #adagrad #optimizerscomparison #aicommunity #airesearch #datascience #computervision #nlp #mlengineer
