NaturalSpeech2: A Multilingual Text-to-Speech Synthesis System

Author: NeuralTalk

Uploaded: 2023-05-20

Views: 4051

Description:

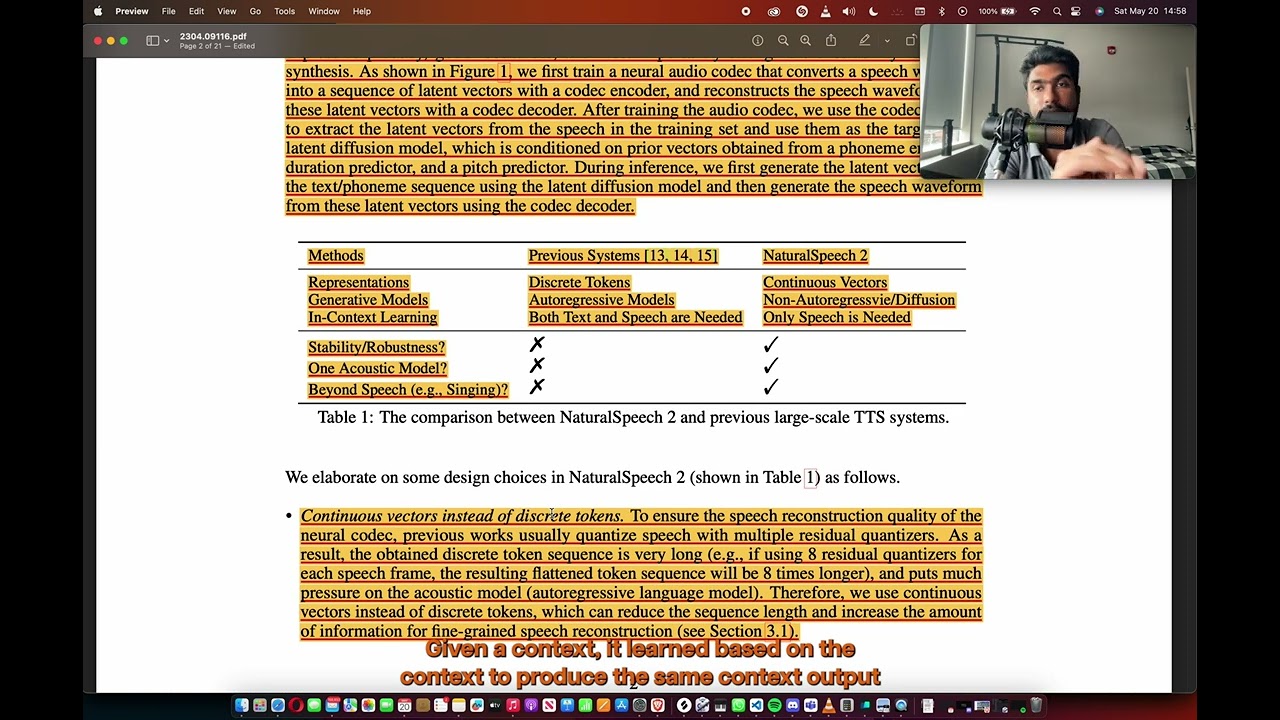

Welcome to this video on "NaturalSpeech2," a multilingual text-to-speech (TTS) synthesis system developed by the Speech Research team. In this video, we explore the key features and advancements of NaturalSpeech2, which aims to produce realistic, natural-sounding synthesized speech in multiple languages.

NaturalSpeech2 is the result of extensive research and development, leveraging the latest advancements in deep learning and speech synthesis. This system has been designed to tackle the challenges of generating high-quality, human-like speech that captures the nuances and subtleties of natural language.

Throughout this video, we will delve into the core components and methodologies behind NaturalSpeech2. The paper we will be discussing, available at [link to the paper], provides an in-depth understanding of the system's architecture, training techniques, and evaluation results. It serves as a comprehensive guide for researchers and developers interested in advancing the field of TTS synthesis.

Some of the key highlights of NaturalSpeech2 include its ability to synthesize speech in multiple languages, with a focus on maintaining the prosody and intonation specific to each language. The system employs a deep neural network-based approach, utilizing techniques such as Tacotron and Transformer, to generate high-quality speech waveforms from input text.

The paper also highlights the importance of data collection and preprocessing in training a multilingual TTS system. NaturalSpeech2 utilizes a vast amount of multilingual and multi-style speech data, ensuring diversity and coverage across different languages, accents, and speaking styles. This broad dataset enables the system to generalize well and produce natural-sounding speech across various linguistic contexts.

Furthermore, the evaluation results show NaturalSpeech2 outperforming previous TTS systems. Subjective listening-test metrics such as mean opinion score (MOS) and naturalness ratings support the system's ability to produce highly intelligible and natural speech across multiple languages.
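For viewers unfamiliar with MOS, here is a minimal sketch of how such a score is commonly computed: listeners rate each sample on a 1 to 5 scale, and the ratings are averaged, usually with a confidence interval. The function name `mean_opinion_score` and the ratings below are made up for illustration; they are not taken from the paper, and the paper's own evaluation protocol may differ.

```python
import math
import statistics


def mean_opinion_score(ratings):
    """Return (mos, half_width) for a list of 1-5 listener ratings.

    half_width is a normal-approximation 95% confidence half-width,
    an assumption made here for simplicity.
    """
    if len(ratings) < 2:
        raise ValueError("need at least two ratings")
    if any(r < 1 or r > 5 for r in ratings):
        raise ValueError("ratings must lie in the range 1..5")
    mos = statistics.mean(ratings)
    # 1.96 * sample standard deviation / sqrt(n)
    half_width = 1.96 * statistics.stdev(ratings) / math.sqrt(len(ratings))
    return mos, half_width


# Hypothetical ratings from eight listeners for one synthesized utterance.
ratings = [4, 5, 4, 3, 5, 4, 4, 5]
mos, half_width = mean_opinion_score(ratings)
print(f"MOS = {mos:.2f} +/- {half_width:.2f}")
```

With so few ratings the interval is wide, which is why listening tests usually collect many ratings per system before comparing scores.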

Whether you're interested in the technical details of NaturalSpeech2 or simply fascinated by the advancements in speech synthesis, this video and the accompanying paper will provide you with a comprehensive overview of this cutting-edge TTS system. Join us as we explore the exciting world of NaturalSpeech2 and witness its potential in revolutionizing the way we interact with synthesized speech.

Remember to check out the full paper for a deep dive into NaturalSpeech2's architecture and techniques. Don't forget to like this video, subscribe to our channel, and leave your thoughts and questions in the comments section below. Enjoy the video!
