Transformer Model (1/2): Attention Layers

Author: Shusen Wang

Uploaded: 2021-04-16

Views: 31294

Description:

Next Video: Transformer Model (2/2): Build a Deep Neur...

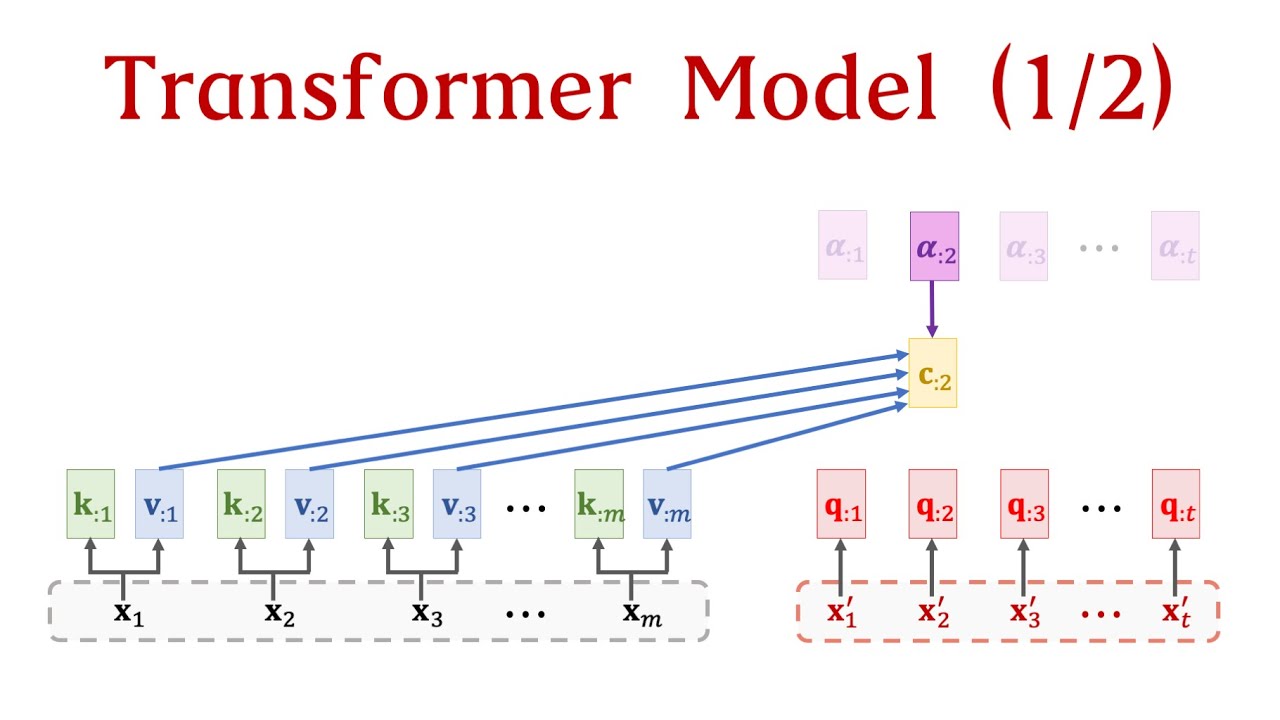

Transformer models are state-of-the-art language models. They are built from attention and dense layers, with no RNN. Rather than studying each module of the Transformer in isolation, let us try to build a Transformer model from scratch. In this lecture, we remove the RNNs while keeping attention, which gives us two building blocks: an attention layer and a self-attention layer. In the next lecture, we combine attention, self-attention, and dense layers into a deep neural network known as the Transformer.
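As a rough illustration of the two layers named above, here is a minimal NumPy sketch. It is not taken from the lecture slides: the function names, the row-per-token layout, the dimensions, and the random weight matrices are all assumptions made for the example, and the division by the square root of the key dimension follows the scaled dot-product form in Vaswani et al. An attention layer takes queries from one sequence and keys and values from another; a self-attention layer is the same computation with both inputs set to the same sequence.

```python
import numpy as np


def softmax(z, axis=-1):
    """Numerically stable softmax along the given axis."""
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def attention(x_src, x_tgt, w_q, w_k, w_v):
    """Attention layer sketch (hypothetical helper, not the author's code).

    x_src: (m, d_in) source sequence, supplies keys and values.
    x_tgt: (t, d_in) target sequence, supplies queries.
    Returns context vectors (t, d) and attention weights (t, m).
    """
    q = x_tgt @ w_q  # queries, shape (t, d)
    k = x_src @ w_k  # keys, shape (m, d)
    v = x_src @ w_v  # values, shape (m, d)
    # Scaled dot-product scores: one row of m scores per query.
    scores = q @ k.T / np.sqrt(k.shape[-1])
    weights = softmax(scores, axis=-1)  # each row sums to 1
    return weights @ v, weights


def self_attention(x, w_q, w_k, w_v):
    """Self-attention: queries, keys, and values all come from x."""
    return attention(x, x, w_q, w_k, w_v)


# Toy usage with assumed sizes and random (untrained) parameters.
rng = np.random.default_rng(0)
d_in, d = 8, 4
w_q, w_k, w_v = (rng.normal(size=(d_in, d)) for _ in range(3))
x_src = rng.normal(size=(5, d_in))  # 5 source tokens
x_tgt = rng.normal(size=(3, d_in))  # 3 target tokens

context, weights = attention(x_src, x_tgt, w_q, w_k, w_v)
self_context, self_weights = self_attention(x_src, w_q, w_k, w_v)
```

Note that no recurrence appears anywhere: every output row is computed from the full input in one matrix product, which is the sense in which the RNN has been removed.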

Slides: https://github.com/wangshusen/DeepLea...

Reference:

Vaswani et al. Attention Is All You Need. In NIPS, 2017.
