Vision-Tactile Sensor — Intelligent Perception for the Next Generation of Robotics

Author: Connecting Innovations

Uploaded: 2025-11-08

Views: 36

Description:

VT10-G Vision-Tactile Sensor — Intelligent Perception for the Next Generation of Robotics

1. Introduction

The VT10-G Vision-Tactile Sensor represents a new milestone in robotic perception technology.

Unveiled at the 2025 Shanghai Industrial Expo, it integrates visual sensing and tactile feedback into a single compact module, so that a robot can both see and feel the objects it handles.

By merging optical imaging with force and pressure data, the VT10-G lets robotic systems perform complex manipulation tasks, such as object recognition, grasp stability assessment, slip detection, and adaptive control, with a sensitivity approaching that of the human hand.

This innovation is ideal for industrial automation, precision assembly, smart manufacturing, service robotics, and medical applications.

2. Core Concept

Traditional industrial robots rely mainly on visual sensors (such as cameras) for positioning and identification. However, vision alone cannot detect subtle mechanical properties and events such as surface texture, softness, or micro-slip.

The VT10-G combines vision and tactile perception, fusing optical and pressure data into a detailed three-dimensional picture of contact interactions.

In essence, the sensor not only sees the object but also feels how it responds, enabling adaptive decision-making under dynamic and uncertain conditions.

3. Working Principle

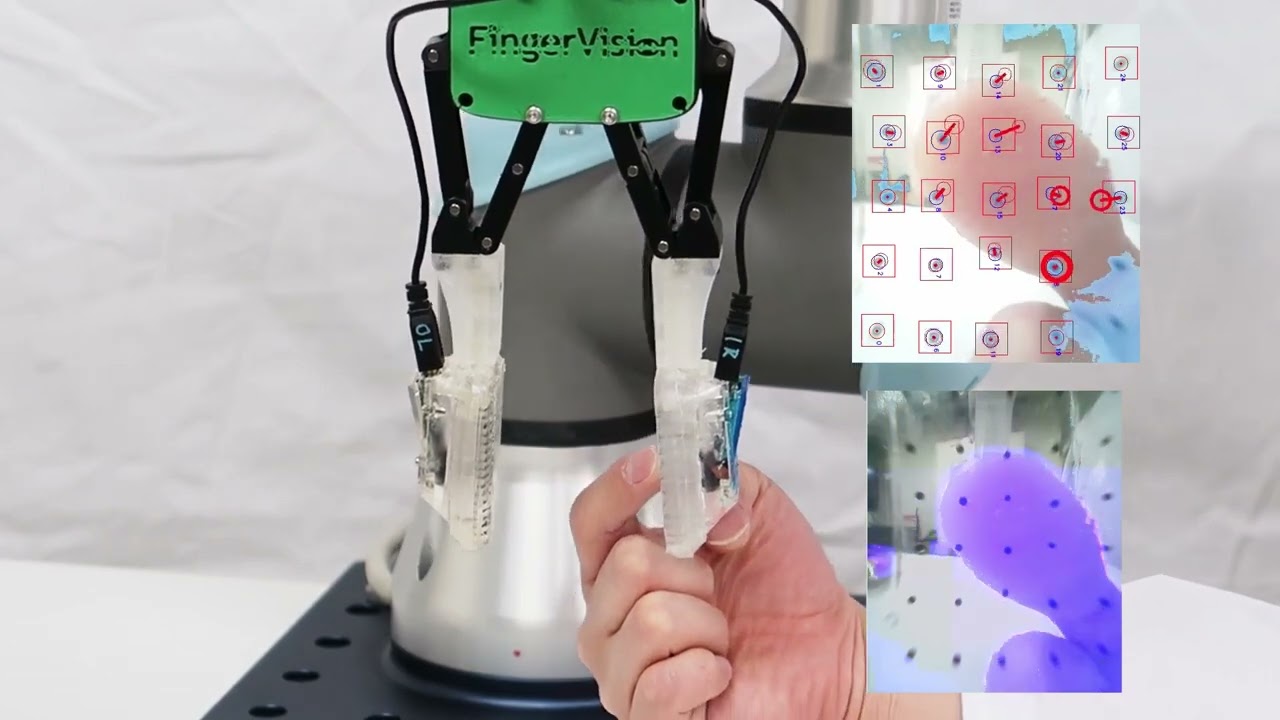

a. Vision Subsystem

At its core, the VT10-G incorporates a high-resolution embedded camera with a wide field of view. The optical lens observes the deformation pattern of a soft, transparent elastomer surface illuminated by an internal LED array.

When the robot’s gripper or fingertip touches an object, the elastomer surface deforms slightly. The camera captures this deformation in real time, generating high-precision data about:

Contact geometry

Surface curvature and texture

Slip direction and friction coefficient

Advanced onboard AI algorithms convert this optical deformation pattern into quantitative tactile maps.
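The conversion from camera frames to a tactile map can be illustrated with a minimal sketch. It is not the VT10-G's onboard algorithm, which the description does not specify; it assumes a simple frame-differencing approach in which the current image of the elastomer is compared with a rest image. The names `deformation_map` and `contact_region`, and the threshold value, are hypothetical.

```python
from typing import List, Optional, Tuple

Frame = List[List[float]]  # grayscale image: rows of pixel intensities in [0, 1]


def deformation_map(reference: Frame, current: Frame) -> Frame:
    """Per-pixel absolute intensity change between the rest and contact frames."""
    return [
        [abs(cur - ref) for ref, cur in zip(ref_row, cur_row)]
        for ref_row, cur_row in zip(reference, current)
    ]


def contact_region(
    deform: Frame, threshold: float
) -> Tuple[int, Optional[Tuple[float, float]]]:
    """Return the contact area in pixels and the deformation-weighted
    centroid (row, col). The threshold is assumed to be non-negative.
    The centroid is None when no pixel exceeds the threshold."""
    area = 0
    total = row_sum = col_sum = 0.0
    for i, row in enumerate(deform):
        for j, value in enumerate(row):
            if value > threshold:
                area += 1
                total += value
                row_sum += i * value
                col_sum += j * value
    if area == 0:
        return 0, None
    return area, (row_sum / total, col_sum / total)


if __name__ == "__main__":
    rest = [[0.0, 0.0, 0.0] for _ in range(3)]
    touched = [[0.0, 0.0, 0.0], [0.0, 0.8, 0.4], [0.0, 0.0, 0.0]]
    area, centroid = contact_region(deformation_map(rest, touched), threshold=0.2)
    print(area, centroid)
```

A real sensor would work on full-resolution images and typically recover depth or shear as well; the sketch only shows how a deformation image reduces to contact area and location.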

b. Tactile Subsystem

MEMS pressure and force sensors are embedded beneath the optical elastomer.

These measure the normal and tangential forces exerted during interaction, providing continuous feedback for grip control.

This dual-sensing design allows the robot to adjust its grasp force autonomously: tightening when slip is detected and relaxing when excessive pressure risks damaging the object.
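The tighten-or-relax behavior can be sketched as a single control step. This is an illustrative rule under stated assumptions, not the sensor's documented controller: slip is assumed to be imminent when the tangential force exceeds the Coulomb friction limit (friction coefficient times normal force), and the function name `adjust_grip`, the step size, and the force limit are all hypothetical.

```python
def adjust_grip(
    force_cmd: float,
    normal_force: float,
    tangential_force: float,
    friction_coeff: float,
    max_safe_force: float,
    step: float = 0.1,
) -> float:
    """Return the next commanded grip force in newtons.

    Assumed rule: relax if the measured normal force exceeds the safe
    limit, tighten if the tangential force exceeds the friction limit
    (slip is imminent), otherwise hold the current command.
    """
    if normal_force > max_safe_force:
        # Excessive pressure: back off, but never command a negative force.
        return max(0.0, force_cmd - step)
    if abs(tangential_force) > friction_coeff * normal_force:
        # Outside the friction cone: squeeze harder, capped at the safe limit.
        return min(max_safe_force, force_cmd + step)
    return force_cmd


if __name__ == "__main__":
    # Slipping: 1.5 N tangential against a 0.5 * 2.0 N friction limit.
    print(adjust_grip(2.0, 2.0, 1.5, 0.5, 5.0))
    # Over the 5.0 N safe limit: the command is reduced.
    print(adjust_grip(6.0, 6.0, 0.5, 0.5, 5.0))
```

Checking the pressure limit first is a deliberate choice in this sketch: protecting the object takes priority over preventing slip.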

c. Fusion Algorithm

The VT10-G’s embedded processor runs sensor fusion algorithms that combine optical and pressure data in real time.

By correlating visual deformation with force readings, it builds a 3D model of contact behavior, so that robots can “understand” how objects react under touch.
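One simple way to correlate the two data streams is to estimate how stiff the touched object is. The sketch below is an assumption for illustration only, since the description does not disclose the actual fusion algorithm: it pairs indentation depths (taken to come from the optical channel) with normal forces (taken to come from the MEMS channel) and fits a linear spring model by least squares. The function name `estimate_stiffness` and the units are hypothetical.

```python
from typing import Sequence


def estimate_stiffness(depths_mm: Sequence[float], forces_n: Sequence[float]) -> float:
    """Least-squares slope k of force = k * depth through the origin (N/mm).

    depths_mm: indentation depths, assumed to come from the optical channel.
    forces_n:  normal forces sampled at the same instants from the force channel.
    """
    if len(depths_mm) != len(forces_n):
        raise ValueError("depth and force series must have the same length")
    denom = sum(d * d for d in depths_mm)
    if denom == 0.0:
        raise ValueError("no indentation recorded; stiffness is undefined")
    return sum(d * f for d, f in zip(depths_mm, forces_n)) / denom


if __name__ == "__main__":
    depths = [0.5, 1.0, 1.5]   # mm, from deformation images
    forces = [1.0, 2.0, 3.0]   # N, from force sensors
    print(estimate_stiffness(depths, forces))  # 2.0 N/mm
```

A low estimate suggests a soft object that deforms easily, a high one a rigid object; a controller could use this to choose a gentler or firmer grasp.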
