Rayan Saab, Quantization for Compressing Neural Networks: Theory and (New) Algorithms, 2025.10.14

Author: CodEx Seminar

Uploaded: 2026-01-24

Views: 27

Description:

Speaker: Rayan Saab (University of California San Diego)

Title: Quantization for Compressing Neural Networks: Theory and (New) Algorithms

Date: 2025.10.14

Abstract:

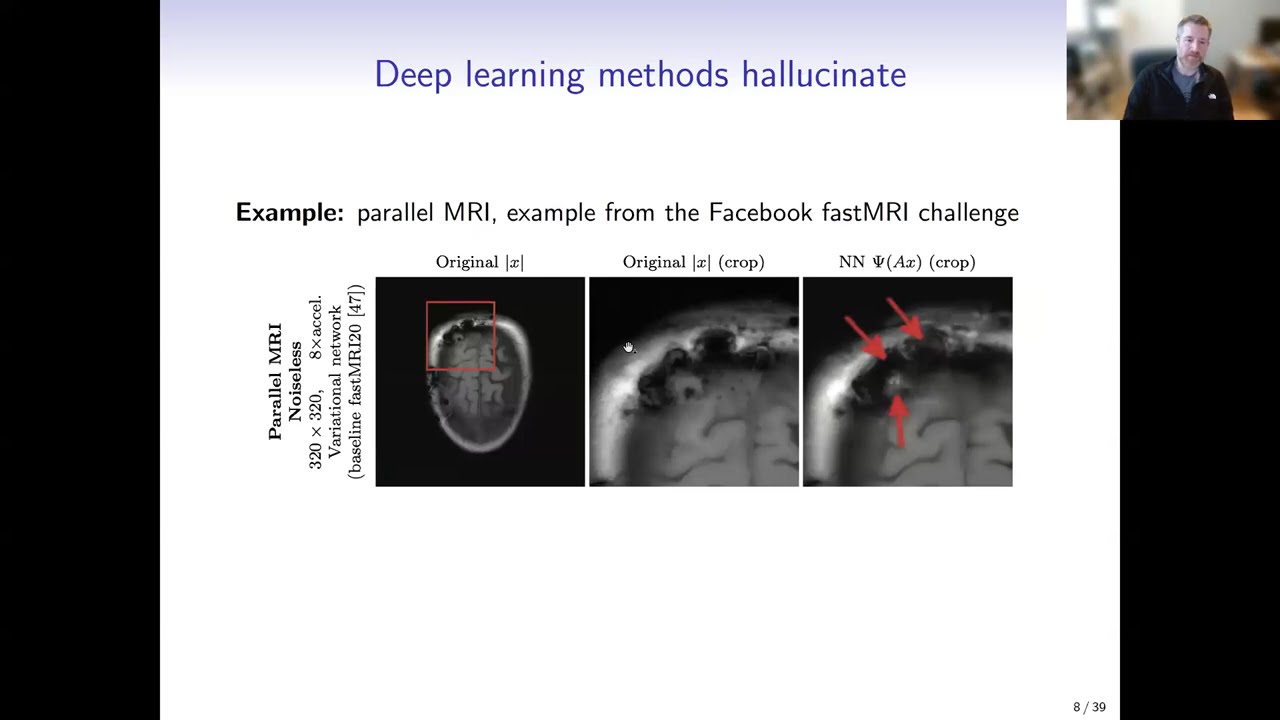

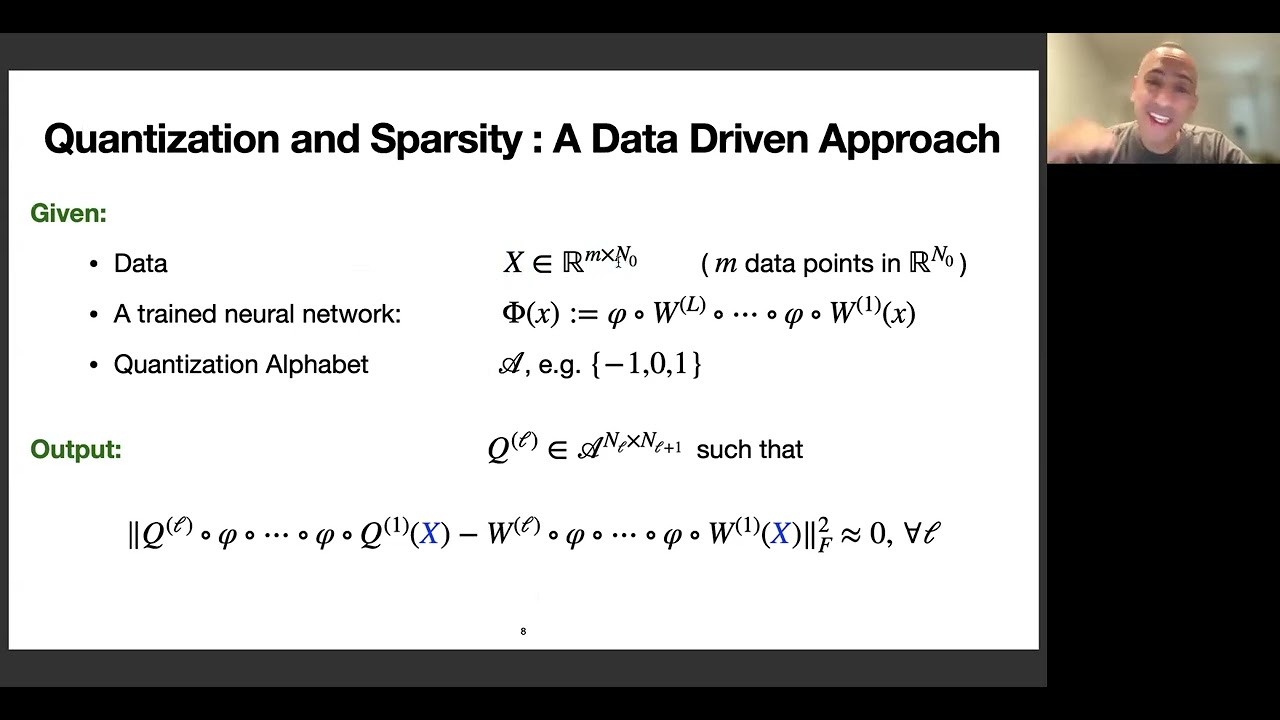

Quantization compresses neural networks by representing weights and activations with few bits, reducing memory, computation time, and energy while preserving inference accuracy. However, the underlying optimization problems are NP-hard in general. So, one must settle for computationally efficient approximate solutions, ideally ones with theoretical error guarantees.
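As a rough illustration of what "representing weights with few bits" means, the sketch below rounds each weight to the nearest point of a small symmetric uniform grid. The function name `quantize_rtn`, the choice of grid, and the max-based scale are assumptions made for this example; the abstract does not specify them.

```python
import numpy as np

def quantize_rtn(w, bits=4):
    """Round-to-nearest onto a symmetric uniform grid (illustrative only).

    Returns the dequantized weights, the integer codes, and the scale.
    """
    w = np.asarray(w, dtype=float)
    qmax = 2 ** (bits - 1) - 1          # largest integer level, e.g. 7 for 4 bits
    max_abs = np.max(np.abs(w))
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Integer codes in {-qmax, ..., qmax}: fewer than 2**bits distinct values.
    codes = np.clip(np.round(w / scale), -qmax, qmax).astype(int)
    return codes * scale, codes, scale

w = np.array([0.0, 0.3, -1.0, 1.0])
w_hat, codes, scale = quantize_rtn(w, bits=2)
print(codes, scale)
```

With this choice of scale every weight lies inside the grid's range, so the per-entry rounding error is at most half the grid spacing. Rounding each weight independently ignores the data the layer actually sees, which is the gap that data-aware methods aim to close.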

We analyze OPTQ, a widely used quantization algorithm in the literature. We provide new theory: an error-evolution identity, layerwise error bounds, and theoretical justification for heuristics used in practice, including for feature ordering, regularization, and alphabet size. We further study a stochastic variant that yields entrywise control on the error. With these results in hand, we introduce Qronos, a new related algorithm that first corrects errors resulting from previous layers, and thus attains stronger guarantees. We conclude with numerical results on modern language models.
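For readers unfamiliar with this family of methods, here is a minimal sketch of greedy, data-aware quantization with error feedback, in the spirit of OPTQ-style updates. It is not the algorithm as presented in the talk and it is not Qronos. The names `round_to_grid` and `greedy_quantize`, the fixed `scale` and `qmax`, and the damping rule are all assumptions for illustration; `damp` plays the role of the regularization heuristic mentioned above, and the loop order corresponds to the feature ordering.

```python
import numpy as np

def round_to_grid(x, scale, qmax):
    """Nearest point of the alphabet {-qmax*scale, ..., qmax*scale}."""
    return scale * np.clip(np.round(x / scale), -qmax, qmax)

def greedy_quantize(w, X, scale, qmax, damp=0.01):
    """Quantize w one coordinate at a time (hypothetical helper).

    After each rounding step, the not-yet-quantized coordinates are
    adjusted to compensate for the error measured through the data X.
    """
    w = np.asarray(w, dtype=float).copy()
    n = w.size
    H = X.T @ X
    H = H + damp * np.mean(np.diag(H)) * np.eye(n)  # regularization (assumed form)
    Hinv = np.linalg.inv(H)
    q = np.zeros(n)
    for i in range(n):
        q[i] = round_to_grid(w[i], scale, qmax)
        err = (w[i] - q[i]) / Hinv[i, i]
        # Push the rounding error onto the remaining coordinates.
        w[i + 1:] -= err * Hinv[i, i + 1:]
        # Eliminate coordinate i from the inverse of the regularized Gram matrix.
        Hinv = Hinv - np.outer(Hinv[:, i], Hinv[i, :]) / Hinv[i, i]
    return q

rng = np.random.default_rng(0)
X = rng.standard_normal((64, 8))       # calibration inputs (synthetic)
w = rng.standard_normal(8)             # one neuron's weights (synthetic)
q = greedy_quantize(w, X, scale=0.25, qmax=7)
rtn = round_to_grid(w, 0.25, 7)
print(np.linalg.norm(X @ (w - q)), np.linalg.norm(X @ (w - rtn)))
```

The two printed numbers are the layerwise output errors of the greedy scheme and of plain round-to-nearest on the same synthetic data. The sketch inverts the Gram matrix directly for clarity; practical implementations typically use a Cholesky-based formulation for speed and numerical stability.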
