CPU LLM #4: The DNA of LLMs - How Matrix Multiplication Optimization Delivers 6x Performance Gains

Автор: ANTSHIV ROBOTICS

Загружено: 2025-07-18

Просмотров: 566

Описание:

🚀 Ever wondered how large language models (LLMs) can run efficiently on CPUs? It all comes down to optimizing the "DNA" of AI: General Matrix Multiplication (GEMM) kernels!

In this video, we take you on a deep dive into the world of CPU-optimized LLM runtimes, built from scratch in pure C. We explore how highly optimized GEMM (General Matrix Multiply) kernels are the fundamental building blocks for modern AI inference and training, driving massive performance gains.

What you'll learn:

The Importance of GEMM: Understand why C=alphaAB+betaC is the workhorse behind neural networks, including linear layers, attention mechanisms, and convolutional layers.

Memory Layout Matters: Discover how smart memory allocation and avoiding costly transposes are crucial for CPU performance.

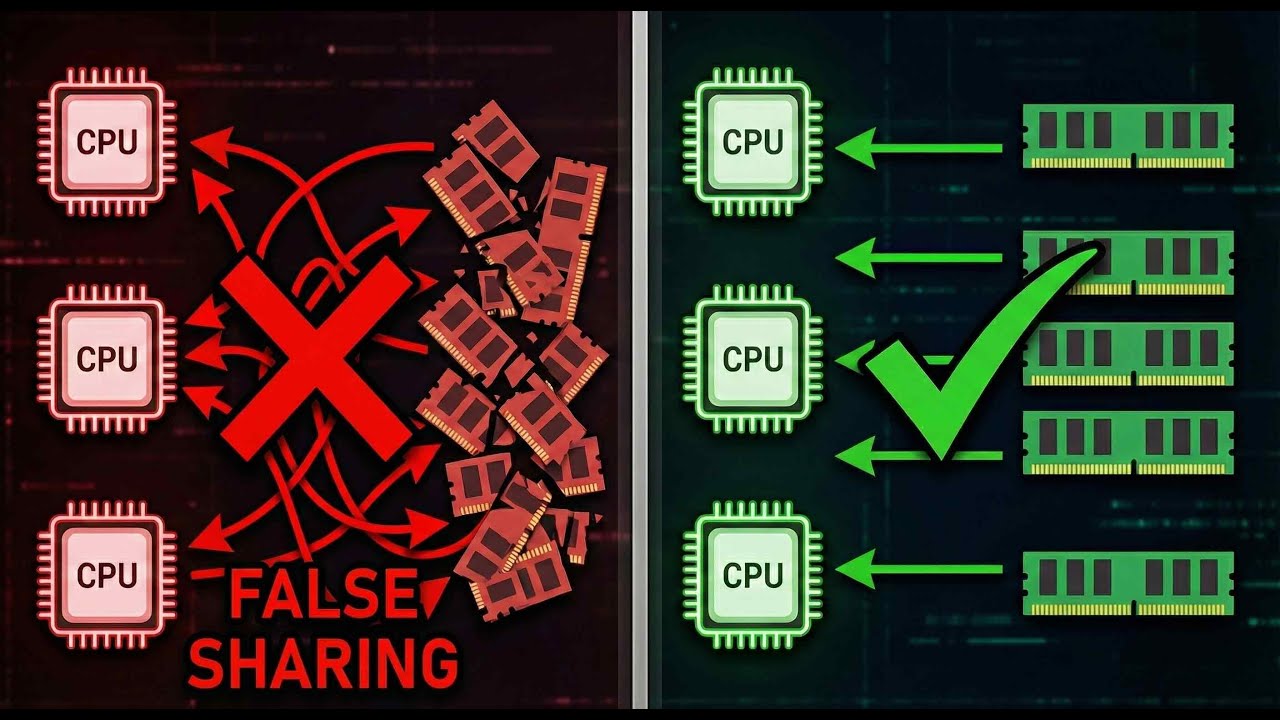

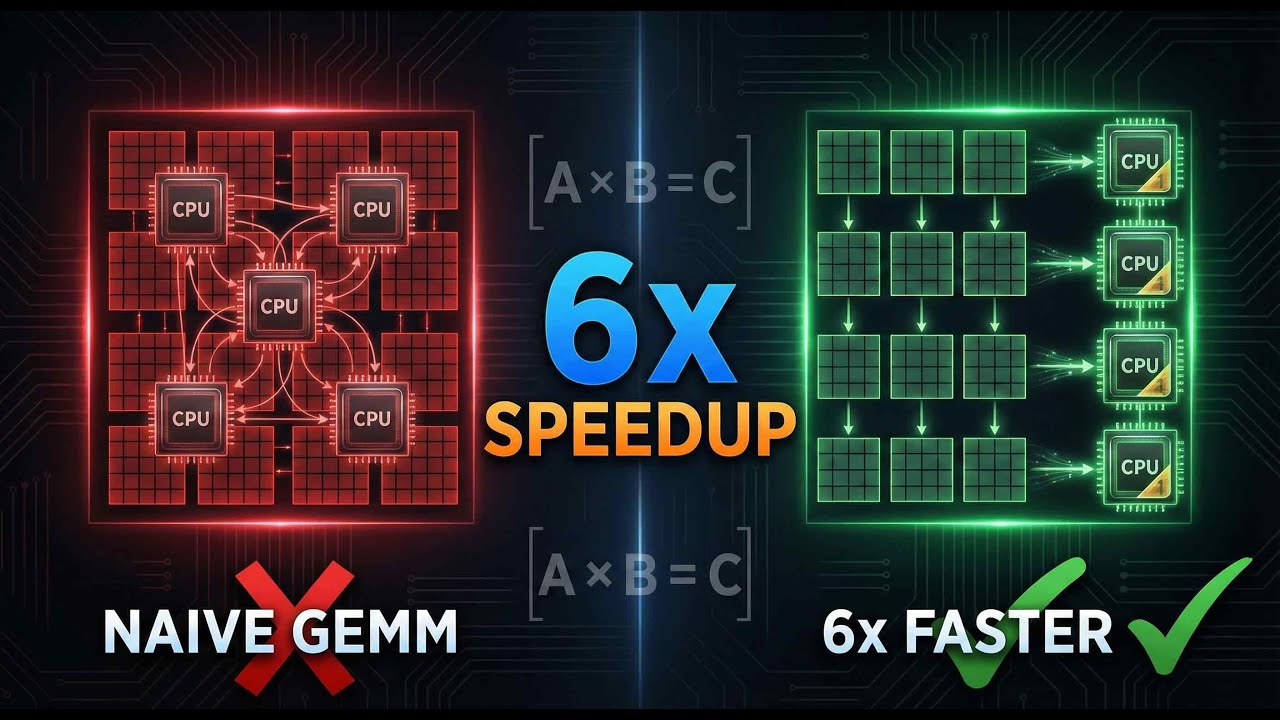

Four Levels of Optimization: We break down the engineering of distinct GEMM kernels:

Naive Parallel GEMM: Our baseline with basic triple-loop implementation and OpenMP.

Simple AVX-512 Parallel GEMM: Introducing Intel AVX-512 intrinsics for significant vectorization speedup.

Fine-Grained Blocked GEMM: Combining AVX-512 with cache blocking (64x64 blocks) to improve data locality and cache utilization.

Token-Parallel Orchestration: Our key innovation! This higher-level strategy distributes input tokens across multiple CPU cores, each executing a serial blocked GEMM for maximum CPU utilization and near-perfect scaling.

Real-World Performance: See the significant speedups achieved, with Token-Parallel Orchestration delivering over 6x performance gain compared to the Naive approach for both MLP and QKV GEMM operations.

The Bigger Vision: Learn how this GEMM work is the foundational Phase 1 of building a complete CPU-native AI runtime, with future plans for a full forward pass, backward pass, optimizer kernels, and even mixed-precision training. Our ultimate vision is to democratize AI by making high-performance inference accessible on any CPU.

This project emphasizes a comprehensive benchmarking approach to guide kernel selection and ensure numerical stability.

Codebase Highlights:

The accompanying C codebase demonstrates these optimizations, featuring:

Optimal memory layout with 64-byte alignment and 2MB Huge Pages for zero fragmentation.

Hardware-aware optimization leveraging AVX-512 intrinsics.

An integrated benchmarking framework for transparent and reproducible results.

Watch now to understand the "DNA of AI" and how it's being optimized for the CPU!

You can join our discord channel here:

/ discord

** Open Source Repositories in github **

The github repository to access the Drone code:

► https://github.com/antshiv/BLEDroneCo...

The handheld controller code:

]

► https://github.com/antshiv/BLEHandhel...

The github repository to access the thrust stand files:

► https://github.com/antshiv/ThrustStand

*** MCU Development Environment:

► NXP Microcontrollers- McuXpresso

► Microchip Microcontrollers including Arduino- Microchip Studio

► Linux + VI + ARM GCC

Linux Environment:

► VirtualBox + Linux Mint

► Window Manager - Awesome WM

Electronic Tools I use:

► Oscilloscope Siglent SDS1104X-E - https://amzn.to/3nRcziY

► Power source - Yihua YH-605D

► Preheater Hotplate - Youyue946c - https://amzn.to/356DhgS

► Soldering Station - Yihua 937D - https://amzn.to/33VXm9b

► Hot Air gun - Sparkfun 303d

► Logic Analyzer - Salae - https://amzn.to/3AoQ4qy

► Third hand - PCBite Kit - https://amzn.to/3JCYZbr

► Solder fume Extractor - https://amzn.to/3H2a0kE

► Microscope - https://amzn.to/3vQXz9d

Software Tools I use:

► PCB Design - Altium

► Mechanical Part modelling - Solidworks

► 3d Modelling and design prototyping - 3ds Max

► Rendering Engine - VRay

► Mathematical Modelling and model based design - MATLAB and Simulink

Links:

► Website: https://www.antshiv.com

► Blog: https://shivasnotes.com

► Patreon page: / antshiv_robotics

DISCLAIMERS:

We are a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for us to earn fees by linking to Amazon.com and affiliated sites.

This video was not paid for by outside persons or manufacturers.

No gear was supplied to me for this video.

The content of this video and my opinions were not reviewed or paid for by any outside persons.

Повторяем попытку...

Доступные форматы для скачивания:

Скачать видео

-

Информация по загрузке: